最新要闻

- 三缸豪车 雷克萨斯最入门SUV车型LBX官图发布:用上1.5L混动-世界微速讯

- 腾讯FPS王炸大作!《无畏契约》国服客户端预载:6月8日终测 焦点

- 夏季风扇和空调到底谁更健康?看完明白了

- 2023年5月新能源车批发销量出炉:比亚迪能打10个长城

- 半藏森林克隆人暂下线:还在内测 名额已满_焦点简讯

- ppt应该保存什么格式

- 每日播报!高中最特别的时刻!高考前最后一个晚自习还记得多少?

- 哪吒CEO张勇:哪吒S是最漂亮的B级车 还是100万内最好的轿跑 热门

- 迪士尼一边涨价一边裁员:门票卖到799元

- 女子酒驾被查 喝了几瓶菠萝啤 以为是饮料 天天新资讯

- 天天热头条丨《小美人鱼》反派人类公主凡妮莎剧照出炉:比美人鱼颜值高、超甜

- ST华铁(000976)6月5日主力资金净买入651.43万元 全球热闻

- 西部矿业: 目前上半年尚未结束,公司暂时没有收到锂资源公司分红的消息_每日快讯

- 今日黄金td行情分析(2023年6月5日)

- 前沿资讯!净化器原厂/第三方滤芯对比:差距高下立判

- 网传北京将出台摩托车新规 京A禁止过户 多方回应

手机

iphone11大小尺寸是多少?苹果iPhone11和iPhone13的区别是什么?

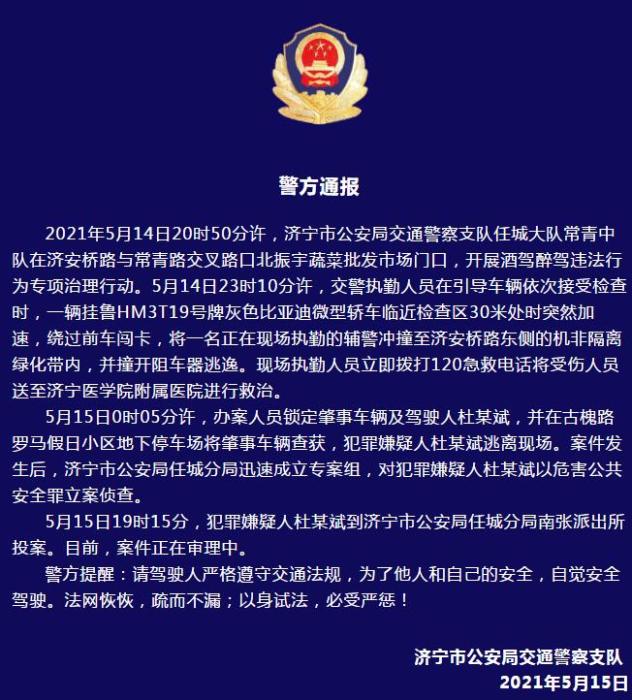

警方通报辅警执法直播中被撞飞:犯罪嫌疑人已投案

- iphone11大小尺寸是多少?苹果iPhone11和iPhone13的区别是什么?

- 警方通报辅警执法直播中被撞飞:犯罪嫌疑人已投案

- 男子被关545天申国赔:获赔18万多 驳回精神抚慰金

- 3天内26名本土感染者,辽宁确诊人数已超安徽

- 广西柳州一男子因纠纷杀害三人后自首

- 洱海坠机4名机组人员被批准为烈士 数千干部群众悼念

家电

Druid's active connection count keeps growing

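1. Symptoms

A while ago I added an endpoint to a legacy project: a paged query over database records, used by the front end for display.

(Hold on, this is not a story about paged-query performance.)

The requirement was simple and done in no time, but not long after release an alert fired: Druid's active connection count had exceeded 90%.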


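Previously the active connection count averaged close to zero. This release added nothing but one paged-query endpoint, yet the active count soared. Strange, since I had not changed anything unusual.

A paged query needs the total record count plus the records of the current page. The usual choice is a paging plugin such as PageHelper, but that means pulling in the PageHelper jar, and the project already had mybatis-paginator. To avoid one more dependency, I built the endpoint on mybatis-paginator.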

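2. Analysis

The alert was baffling, because the change was tiny: a single paged query.

I went over the paging code carefully and found nothing wrong.

So what next? Fortunately Druid ships with a monitor page, so the first step was to enable it and use it for the analysis.

2.1 Configuring druid-monitor

First, some configuration has to be added to web.xml: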

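    <filter>
        <filter-name>DruidWebStatFilter</filter-name>
        <filter-class>com.alibaba.druid.support.http.WebStatFilter</filter-class>
        <init-param>
            <param-name>exclusions</param-name>
            <param-value>/static/*,*.js,*.gif,*.jpg,*.png,*.css,*.ico,/druid/*</param-value>
        </init-param>
        <init-param>
            <param-name>profileEnable</param-name>
            <param-value>true</param-value>
        </init-param>
    </filter>
    <filter-mapping>
        <filter-name>DruidWebStatFilter</filter-name>
        <url-pattern>/*</url-pattern>
    </filter-mapping>
    <servlet>
        <servlet-name>DruidStatView</servlet-name>
        <servlet-class>com.alibaba.druid.support.http.StatViewServlet</servlet-class>
        <init-param>
            <param-name>loginUsername</param-name>
            <param-value>druid</param-value>
        </init-param>
        <init-param>
            <param-name>loginPassword</param-name>
            <param-value>druid</param-value>
        </init-param>
    </servlet>
    <servlet-mapping>
        <servlet-name>DruidStatView</servlet-name>
        <url-pattern>/druid/*</url-pattern>
    </servlet-mapping>

Then the matching configuration has to be added where the DruidDataSource object is declared.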

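With that in place, the druid-monitor page can be opened and, if nothing goes wrong, it shows the data sources, connection counts and so on.

Naturally, something did go wrong: the page came up, but the data source information simply would not appear.

Was the configuration wrong somewhere? After going through all sorts of configuration notes, it turned out this was probably a Druid bug that an upgrade would fix.

Upgrading Druid from 1.0.12 to 1.0.29 made the data source tab work.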

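2.2 Watching the metrics

On the Data Source page of druid-monitor, three values matter most:

- Active connection count

- Logical connection open count

- Logical connection close count

After running in the test environment for a while, the logical open count was sometimes slightly higher than the close count, which means some connections really were not being closed. The test environment just gets too little traffic for the problem to surface easily.

Is there a way to find out where the connections are left unclosed?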

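2.3 Connection leak detection

A search on Baidu and Google turned up the Druid wiki page by 温少 (Wenshao) on connection leak detection:

... ... Once removeAbandoned=true is set, the ActiveConnection StackTrace attribute in the built-in monitoring page datasource.html shows the stack trace of each unclosed connection, which makes it easy to find out which connections leaked.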
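As a rough sketch of what switching the detection on could look like, the helper below collects the leak-detection settings in a plain `java.util.Properties` object. It assumes the keys `removeAbandoned`, `removeAbandonedTimeout` (in seconds) and `logAbandoned` as read by `DruidDataSourceFactory.createDataSource(Properties)`; the class name and the 180-second value are made up for illustration, and the article does not show the configuration that was actually used.

```java
import java.util.Properties;

/** Illustrative helper (hypothetical name): leak-detection settings for a Druid pool. */
final class LeakDetectionConfig {

    /**
     * @param timeoutSeconds how long a borrowed connection may stay out
     *                       before it is treated as abandoned
     */
    static Properties leakDetectionProperties(int timeoutSeconds) {
        if (timeoutSeconds <= 0) {
            throw new IllegalArgumentException("timeoutSeconds must be positive");
        }
        Properties props = new Properties();
        // Reclaim connections that were borrowed and never returned.
        props.setProperty("removeAbandoned", "true");
        // Threshold in seconds (assumed unit of this key).
        props.setProperty("removeAbandonedTimeout", String.valueOf(timeoutSeconds));
        // Record the stack trace of the code that borrowed the connection.
        props.setProperty("logAbandoned", "true");
        return props;
    }
}
```

These properties would be merged with the usual connection settings (URL, user name, password) before the data source is created, and only in an environment used for diagnosis.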

The captured stack traces look like the one below. At first sight nothing stands out, and not a single frame is project code:

    java.lang.Thread.getStackTrace(Thread.java:1552)
    com.alibaba.druid.pool.DruidDataSource.getConnectionDirect(DruidDataSource.java:1140)
    com.alibaba.druid.filter.FilterChainImpl.dataSource_connect(FilterChainImpl.java:4544)
    com.alibaba.druid.filter.logging.LogFilter.dataSource_getConnection(LogFilter.java:854)
    com.alibaba.druid.filter.FilterChainImpl.dataSource_connect(FilterChainImpl.java:4540)
    com.alibaba.druid.filter.stat.StatFilter.dataSource_getConnection(StatFilter.java:670)
    com.alibaba.druid.filter.FilterChainImpl.dataSource_connect(FilterChainImpl.java:4540)
    com.alibaba.druid.filter.FilterAdapter.dataSource_getConnection(FilterAdapter.java:2723)
    com.alibaba.druid.filter.FilterChainImpl.dataSource_connect(FilterChainImpl.java:4540)
    com.alibaba.druid.pool.DruidDataSource.getConnection(DruidDataSource.java:1064)
    com.alibaba.druid.pool.DruidDataSource.getConnection(DruidDataSource.java:1056)
    com.alibaba.druid.pool.DruidDataSource.getConnection(DruidDataSource.java:104)
    org.springframework.jdbc.datasource.DataSourceUtils.doGetConnection(DataSourceUtils.java:111)
    org.springframework.jdbc.datasource.DataSourceUtils.getConnection(DataSourceUtils.java:77)
    org.mybatis.spring.transaction.SpringManagedTransaction.openConnection(SpringManagedTransaction.java:81)
    org.mybatis.spring.transaction.SpringManagedTransaction.getConnection(SpringManagedTransaction.java:67)
    org.apache.ibatis.executor.BaseExecutor.getConnection(BaseExecutor.java:315)
    org.apache.ibatis.executor.SimpleExecutor.prepareStatement(SimpleExecutor.java:75)
    org.apache.ibatis.executor.SimpleExecutor.doQuery(SimpleExecutor.java:61)
    org.apache.ibatis.executor.BaseExecutor.queryFromDatabase(BaseExecutor.java:303)
    org.apache.ibatis.executor.BaseExecutor.query(BaseExecutor.java:154)
    org.apache.ibatis.executor.CachingExecutor.query(CachingExecutor.java:102)
    org.apache.ibatis.executor.CachingExecutor.query(CachingExecutor.java:82)
    sun.reflect.GeneratedMethodAccessor300.invoke(Unknown Source)
    sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
    java.lang.reflect.Method.invoke(Method.java:497)
    org.apache.ibatis.plugin.Invocation.proceed(Invocation.java:49)
    com.github.miemiedev.mybatis.paginator.OffsetLimitInterceptor$1.call(OffsetLimitInterceptor.java:87)
    com.github.miemiedev.mybatis.paginator.OffsetLimitInterceptor$1.call(OffsetLimitInterceptor.java:85)
    java.util.concurrent.FutureTask.run(FutureTask.java:266)
    java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1142)
    java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:617)
    java.lang.Thread.run(Thread.java:745)

After a few more samples, though, one class name kept showing up: OffsetLimitInterceptor. Could this be the culprit?

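OffsetLimitInterceptor is the class with which mybatis-paginator implements paged queries.

So I tried dropping mybatis-paginator and switching to the most basic hand-written paged query. The problem went away.

Yes, it was solved just like that. Who would have guessed?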
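The article does not show the replacement query, so the following is only a minimal sketch of what a hand-written paged query usually involves: one count statement and one page statement, both run one after the other on the calling thread. The `LIMIT ... OFFSET ...` syntax assumes a MySQL or PostgreSQL style database, and the class name, method names and the `record` table in the usage note are hypothetical.

```java
/** Illustrative paging arithmetic (hypothetical helper, not from the project). */
final class PlainPaging {

    /** Zero-based row offset of a one-based page number. */
    static long offset(int page, int pageSize) {
        if (page < 1 || pageSize < 1) {
            throw new IllegalArgumentException("page and pageSize must be >= 1");
        }
        return (long) (page - 1) * pageSize;
    }

    /** Number of pages needed to show totalCount rows. */
    static long totalPages(long totalCount, int pageSize) {
        if (totalCount < 0 || pageSize < 1) {
            throw new IllegalArgumentException("invalid totalCount or pageSize");
        }
        return (totalCount + pageSize - 1) / pageSize; // ceiling division
    }

    /** Statement that returns the total number of rows of baseSql. */
    static String countSql(String baseSql) {
        return "SELECT COUNT(*) FROM (" + baseSql + ") t";
    }

    /** Statement that returns only the rows of the requested page. */
    static String pageSql(String baseSql, int page, int pageSize) {
        return baseSql + " LIMIT " + pageSize + " OFFSET " + offset(page, pageSize);
    }
}
```

For example, `PlainPaging.pageSql("SELECT * FROM record ORDER BY id", 3, 20)` yields `SELECT * FROM record ORDER BY id LIMIT 20 OFFSET 40`. In a real mapper the limit and offset would be bound as parameters rather than concatenated.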

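3. Root cause

The guess that mybatis-paginator's OffsetLimitInterceptor was responsible turned out to be a lucky one: after the change the problem disappeared.

So let's look at what OffsetLimitInterceptor actually does: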

    @Intercepts({@Signature(
            type = Executor.class,
            method = "query",
            args = {MappedStatement.class, Object.class, RowBounds.class, ResultHandler.class})})
    public class OffsetLimitInterceptor implements Interceptor {
        private static Logger logger = LoggerFactory.getLogger(OffsetLimitInterceptor.class);
        static int MAPPED_STATEMENT_INDEX = 0;
        static int PARAMETER_INDEX = 1;
        static int ROWBOUNDS_INDEX = 2;
        static int RESULT_HANDLER_INDEX = 3;

        static ExecutorService Pool;
        String dialectClass;
        boolean asyncTotalCount = false;

        public Object intercept(final Invocation invocation) throws Throwable {
            final Executor executor = (Executor) invocation.getTarget();
            final Object[] queryArgs = invocation.getArgs();
            final MappedStatement ms = (MappedStatement)queryArgs[MAPPED_STATEMENT_INDEX];
            final Object parameter = queryArgs[PARAMETER_INDEX];
            final RowBounds rowBounds = (RowBounds)queryArgs[ROWBOUNDS_INDEX];
            final PageBounds pageBounds = new PageBounds(rowBounds);

            if(pageBounds.getOffset() == RowBounds.NO_ROW_OFFSET
                    && pageBounds.getLimit() == RowBounds.NO_ROW_LIMIT
                    && pageBounds.getOrders().isEmpty()){
                return invocation.proceed();
            }

            final Dialect dialect;
            try {
                Class clazz = Class.forName(dialectClass);
                Constructor constructor = clazz.getConstructor(MappedStatement.class, Object.class, PageBounds.class);
                dialect = (Dialect)constructor.newInstance(new Object[]{ms, parameter, pageBounds});
            } catch (Exception e) {
                throw new ClassNotFoundException("Cannot create dialect instance: "+dialectClass,e);
            }

            final BoundSql boundSql = ms.getBoundSql(parameter);

            queryArgs[MAPPED_STATEMENT_INDEX] = copyFromNewSql(ms,boundSql,dialect.getPageSQL(), dialect.getParameterMappings(), dialect.getParameterObject());
            queryArgs[PARAMETER_INDEX] = dialect.getParameterObject();
            queryArgs[ROWBOUNDS_INDEX] = new RowBounds(RowBounds.NO_ROW_OFFSET,RowBounds.NO_ROW_LIMIT);

            Boolean async = pageBounds.getAsyncTotalCount() == null ? asyncTotalCount : pageBounds.getAsyncTotalCount();

            // query the records
            Future listFuture = call(new Callable() {
                public List call() throws Exception {
                    return (List)invocation.proceed();
                }
            }, async);

            // query the total count
            if(pageBounds.isContainsTotalCount()){
                Callable countTask = new Callable() {
                    public Object call() throws Exception {
                        Integer count;
                        Cache cache = ms.getCache();
                        if(cache != null && ms.isUseCache() && ms.getConfiguration().isCacheEnabled()){
                            CacheKey cacheKey = executor.createCacheKey(ms,parameter,new PageBounds(),copyFromBoundSql(ms,boundSql,dialect.getCountSQL(), boundSql.getParameterMappings(), boundSql.getParameterObject()));
                            count = (Integer)cache.getObject(cacheKey);
                            if(count == null){
                                count = SQLHelp.getCount(ms,executor.getTransaction(),parameter,boundSql,dialect);
                                cache.putObject(cacheKey, count);
                            }
                        }else{
                            count = SQLHelp.getCount(ms,executor.getTransaction(),parameter,boundSql,dialect);
                        }
                        return new Paginator(pageBounds.getPage(), pageBounds.getLimit(), count);
                    }
                };
                Future countFutrue = call(countTask, async);
                return new PageList(listFuture.get(),countFutrue.get());
            }

            return listFuture.get();
        }

        // runs the query either synchronously or asynchronously
        private Future call(Callable callable, boolean async){
            if(async){
                return Pool.submit(callable);
            }else{
                FutureTask future = new FutureTask(callable);
                future.run();
                return future;
            }
        }

        // ......
    }

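The source shows that the two SQL statements can be executed either synchronously or asynchronously, and that the results are then collected through Future.get().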
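The practical difference between the two branches is which thread ends up running the query. The stand-alone sketch below mirrors the branch in the interceptor's `call` helper using only JDK classes; `CallModes` and `runningThread` are illustrative names, not part of mybatis-paginator.

```java
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.concurrent.FutureTask;

/** Illustrative copy of the sync/async branch (hypothetical class). */
final class CallModes {

    /** async: hand the task to the pool; sync: run it right here on the caller's thread. */
    static <T> Future<T> call(Callable<T> task, boolean async, ExecutorService pool) {
        if (async) {
            return pool.submit(task);
        }
        FutureTask<T> future = new FutureTask<>(task);
        future.run(); // executes immediately on the current thread
        return future;
    }

    /** Returns the name of the thread on which the task was executed. */
    static String runningThread(boolean async) throws Exception {
        ExecutorService pool = Executors.newCachedThreadPool();
        try {
            Callable<String> task = () -> Thread.currentThread().getName();
            return call(task, async, pool).get();
        } finally {
            pool.shutdown();
        }
    }
}
```

In the synchronous case the reported name is the caller's own thread; in the asynchronous case it is a pool worker, which matches the `ThreadPoolExecutor$Worker.run` frames in the captured stack traces.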

The Pool executor used for the asynchronous path is created here:

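    public void setPoolMaxSize(int poolMaxSize) {
        if(poolMaxSize > 0){
            logger.debug("poolMaxSize: {} ", poolMaxSize);
            Pool = Executors.newFixedThreadPool(poolMaxSize);
        }else{
            Pool = Executors.newCachedThreadPool();
        }
    }

At this point the picture is fairly clear: after a thread of the Pool executor terminates, the database connection it borrowed is never released, so the active count keeps growing.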

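(Why does going through this Pool executor leave database connections unreturned? I stepped through the source and still could not find the answer. o(╥﹏╥)o)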
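The article leaves this question open. One possible explanation, offered here purely as a hypothesis that the source does not confirm, is a race on a lazily opened connection: both stack traces in this article reach SpringManagedTransaction.getConnection from a pool thread, so the list query and the count query may both find that no connection has been opened yet, each borrow one, and only the one stored last gets returned when the transaction is closed. The sketch below reproduces that pattern with stand-in classes; `CountingPool` and `LazyTransaction` are invented for illustration and are not MyBatis, Spring or Druid code, and the barrier exists only to force the unlucky interleaving.

```java
import java.util.concurrent.Callable;
import java.util.concurrent.CyclicBarrier;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.concurrent.atomic.AtomicInteger;

/** Hypothetical model of a connection leak caused by unsynchronized lazy opening. */
final class LazyConnectionRace {

    /** Stand-in for a connection pool: only counts borrows and returns. */
    static final class CountingPool {
        final AtomicInteger opened = new AtomicInteger();
        final AtomicInteger closed = new AtomicInteger();
        Object borrow() { opened.incrementAndGet(); return new Object(); }
        void giveBack(Object connection) { closed.incrementAndGet(); }
        int active() { return opened.get() - closed.get(); }
    }

    /** Stand-in for a transaction that opens its connection on first use, without locking. */
    static final class LazyTransaction {
        private final CountingPool pool;
        private final CyclicBarrier bothSawNull; // null in the single-threaded case
        private volatile Object connection;

        LazyTransaction(CountingPool pool, CyclicBarrier bothSawNull) {
            this.pool = pool;
            this.bothSawNull = bothSawNull;
        }

        Object getConnection() throws Exception {
            if (connection == null) {
                if (bothSawNull != null) {
                    bothSawNull.await(); // wait until both callers have passed the null check
                }
                connection = pool.borrow(); // the later assignment overwrites the earlier one
            }
            return connection;
        }

        void close() {
            if (connection != null) {
                pool.giveBack(connection); // only the connection stored last is returned
            }
        }
    }

    /** Runs a "list" and a "count" request and reports connections still out after close(). */
    static int activeAfterClose(boolean async) throws Exception {
        CountingPool pool = new CountingPool();
        if (!async) {
            LazyTransaction tx = new LazyTransaction(pool, null);
            tx.getConnection(); // list query opens the connection
            tx.getConnection(); // count query reuses it
            tx.close();
            return pool.active();
        }
        LazyTransaction tx = new LazyTransaction(pool, new CyclicBarrier(2));
        ExecutorService workers = Executors.newCachedThreadPool();
        try {
            Callable<Object> borrow = tx::getConnection;
            Future<Object> list = workers.submit(borrow);
            Future<Object> count = workers.submit(borrow);
            list.get();
            count.get();
        } finally {
            workers.shutdown();
        }
        tx.close();
        return pool.active();
    }
}
```

Under this model the synchronous path ends with no connection outstanding, while the asynchronous path leaves one behind per request, which would be consistent with an active count that only ever grows. Whether this is what really happens inside the libraries would need to be verified against their source.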

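Many similar reports online end with nothing more than setting removeAbandoned=true. However:

The removeAbandoned feature is not recommended for production; it is meant only for diagnosing connection leaks.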

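4. Other notes

1. Why had this never happened before?

The project's mybatis.xml sets asyncTotalCount=false, so why were queries actually executed asynchronously on the Pool executor?

It turned out that an internal library (二方包) the project depends on ships its own MyBatis configuration file with asyncTotalCount set to true, and that file gets loaded first.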

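2. Why does Druid print no logs?

Druid does not depend on any particular logging component but supports several. It inspects the current environment and picks a suitable implementation, in this order of priority:

log4j > log4j2 > slf4j > commons-logging > jdk-logging

Detection works by trying to load the corresponding class: if loading succeeds, the environment is assumed to support that logging component and it is used for log output.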
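The detection idea can be illustrated with a few lines of plain Java: walk a priority list of class names and take the first one that can be loaded. This is a simplified sketch of the principle, not Druid's LogFactory; `LogDetection` and its methods are hypothetical names.

```java
import java.util.List;
import java.util.Optional;

/** Illustrative "first loadable class wins" detection (hypothetical helper). */
final class LogDetection {

    /** True if the class can be found on the classpath; it is not initialized. */
    static boolean isLoadable(String className) {
        try {
            Class.forName(className, false, LogDetection.class.getClassLoader());
            return true;
        } catch (ClassNotFoundException | LinkageError e) {
            return false;
        }
    }

    /** Returns the first candidate, in priority order, that is loadable. */
    static Optional<String> firstAvailable(List<String> candidates) {
        for (String candidate : candidates) {
            if (isLoadable(candidate)) {
                return Optional.of(candidate);
            }
        }
        return Optional.empty();
    }
}
```

Called with the marker classes in the priority order above (for instance `org.apache.log4j.Logger` first and `java.util.logging.Logger` last), the result depends on which logging jars are present, which is why the same application can log through different back ends in different environments.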

To force a specific logging component, set a JVM startup option, for example:

    -Ddruid.logType=slf4j
    -Ddruid.logType=log4j
    -Ddruid.logType=log4j2
    -Ddruid.logType=commonsLog
    -Ddruid.logType=jdkLog

The relevant source:

    // com.alibaba.druid.support.logging.LogFactory
    public class LogFactory {
        private static Constructor logConstructor;

        static {
            String logType= System.getProperty("druid.logType");
            if(logType != null){
                if(logType.equalsIgnoreCase("slf4j")){
                    tryImplementation("org.slf4j.Logger", "com.alibaba.druid.support.logging.SLF4JImpl");
                }else if(logType.equalsIgnoreCase("log4j")){
                    tryImplementation("org.apache.log4j.Logger", "com.alibaba.druid.support.logging.Log4jImpl");
                }else if(logType.equalsIgnoreCase("log4j2")){
                    tryImplementation("org.apache.logging.log4j.Logger", "com.alibaba.druid.support.logging.Log4j2Impl");
                }else if(logType.equalsIgnoreCase("commonsLog")){
                    tryImplementation("org.apache.commons.logging.LogFactory", "com.alibaba.druid.support.logging.JakartaCommonsLoggingImpl");
                }else if(logType.equalsIgnoreCase("jdkLog")){
                    tryImplementation("java.util.logging.Logger", "com.alibaba.druid.support.logging.Jdk14LoggingImpl");
                }
            }
            // Prefer log4j over Apache Commons Logging, because the latter cannot record the real caller of the log statement
            tryImplementation("org.apache.log4j.Logger", "com.alibaba.druid.support.logging.Log4jImpl");
            tryImplementation("org.apache.logging.log4j.Logger", "com.alibaba.druid.support.logging.Log4j2Impl");
            tryImplementation("org.slf4j.Logger", "com.alibaba.druid.support.logging.SLF4JImpl");
            tryImplementation("org.apache.commons.logging.LogFactory", "com.alibaba.druid.support.logging.JakartaCommonsLoggingImpl");
            tryImplementation("java.util.logging.Logger", "com.alibaba.druid.support.logging.Jdk14LoggingImpl");

            if (logConstructor == null) {
                try {
                    logConstructor = NoLoggingImpl.class.getConstructor(String.class);
                } catch (Exception e) {
                    throw new IllegalStateException(e.getMessage(), e);
                }
            }
        }
        // ...
    }

With the startup option -Ddruid.logType=slf4j set, a connection that is not closed properly produces a log entry like this:

    2023-05-25 10:19:49.153 [TID:] [trace ] [userName ] [eid ] [Druid-ConnectionPool-Destroy-1392407412] ERROR com.alibaba.druid.pool.DruidDataSource - abandon connection, owner thread: pool-1-thread-2, connected at : 1684980819318, open stackTrace
    at java.lang.Thread.getStackTrace(Thread.java:1559)
    at com.alibaba.druid.pool.DruidDataSource.getConnectionDirect(DruidDataSource.java:1140)
    at com.alibaba.druid.filter.FilterChainImpl.dataSource_connect(FilterChainImpl.java:4544)
    at com.alibaba.druid.filter.logging.LogFilter.dataSource_getConnection(LogFilter.java:854)
    at com.alibaba.druid.filter.FilterChainImpl.dataSource_connect(FilterChainImpl.java:4540)
    at com.alibaba.druid.filter.stat.StatFilter.dataSource_getConnection(StatFilter.java:670)
    at com.alibaba.druid.filter.FilterChainImpl.dataSource_connect(FilterChainImpl.java:4540)
    at com.alibaba.druid.filter.FilterAdapter.dataSource_getConnection(FilterAdapter.java:2723)
    at com.alibaba.druid.filter.FilterChainImpl.dataSource_connect(FilterChainImpl.java:4540)
    at com.alibaba.druid.pool.DruidDataSource.getConnection(DruidDataSource.java:1064)
    at com.alibaba.druid.pool.DruidDataSource.getConnection(DruidDataSource.java:1056)
    at com.alibaba.druid.pool.DruidDataSource.getConnection(DruidDataSource.java:104)
    at org.springframework.jdbc.datasource.DataSourceUtils.doGetConnection(DataSourceUtils.java:111)
    at org.springframework.jdbc.datasource.DataSourceUtils.getConnection(DataSourceUtils.java:77)
    at org.mybatis.spring.transaction.SpringManagedTransaction.openConnection(SpringManagedTransaction.java:81)
    at org.mybatis.spring.transaction.SpringManagedTransaction.getConnection(SpringManagedTransaction.java:67)
    at com.github.miemiedev.mybatis.paginator.support.SQLHelp.getCount(SQLHelp.java:56)
    at com.github.miemiedev.mybatis.paginator.OffsetLimitInterceptor$2.call(OffsetLimitInterceptor.java:105)
    at java.util.concurrent.FutureTask.run$$$capture(FutureTask.java:266)
    at java.util.concurrent.FutureTask.run(FutureTask.java)
    at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1142)
    at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:617)
    at java.lang.Thread.run(Thread.java:748)
    ownerThread current state is TERMINATED, current stackTrace
