最新要闻

- 天天快讯:缩减5G基站招标规模 大幅减少5G投资?中移动回应:外界误读

- 天天关注:又一个满血14GB/s!PCIe 5.0 SSD用上巨型风扇 太过分了

- 抗原检测盒优惠了!50人份到手19.9元

- 白玉兰奖入围名单揭晓 网友:正午阳光赢麻了

- 抛弃反人类半幅方向盘 特斯拉Cybertruck电动皮卡实车图:今年必交付 当前热门

- 武磊:客场也能感受球迷的热爱和支持 超越一切对立_天天最资讯

- 手机性价比被吐槽 HTC对元宇宙是真爱:不认同降温说、非常乐观

- Redmi性能王者!K60 Ultra工业设计图曝光 前沿资讯

- 《王者荣耀》体验服爆料:中单法师狂喜 斩杀史诗级优化

- 全球热消息:导航出错驶入紧急停车带 驶出时被撞 科普:紧急停车带该怎么用

- 全球视点!内存连续三个季度暴跌 三大厂疯狂减产!想涨价?没门儿

- 海贼王:“七武海”原型揭秘!居然来自30年前游戏《浪漫沙加2》

- 举报比亚迪排放不达标!长城汽车晒业绩:1-4月同比增长99.1% 买它还是比亚迪?_每日精选

- 焦点播报:16核R9 7945HX加持!联想公布新版拯救者R9000P参数

- 世界百事通!法拉第未来官宣:FF 91第一阶段交付5月31日开始 车主先培训

- 环球视讯!促进跨区域产业链、供应链、创新链、资金链、人才链深度融合,一大批长三角G60科创走廊跨区域合作重点项目签约

手机

iphone11大小尺寸是多少?苹果iPhone11和iPhone13的区别是什么?

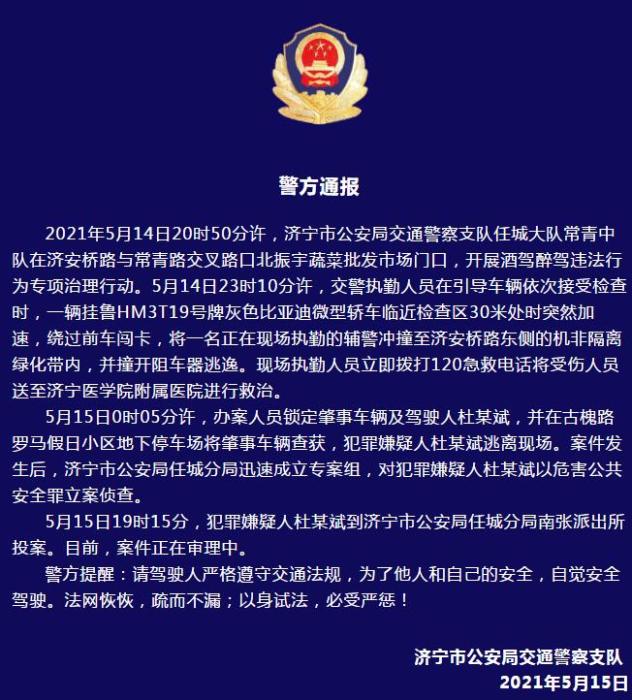

警方通报辅警执法直播中被撞飞:犯罪嫌疑人已投案

- iphone11大小尺寸是多少?苹果iPhone11和iPhone13的区别是什么?

- 警方通报辅警执法直播中被撞飞:犯罪嫌疑人已投案

- 男子被关545天申国赔:获赔18万多 驳回精神抚慰金

- 3天内26名本土感染者,辽宁确诊人数已超安徽

- 广西柳州一男子因纠纷杀害三人后自首

- 洱海坠机4名机组人员被批准为烈士 数千干部群众悼念

家电

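The Most Complete Kubernetes (k8s) Summary on the Web: This One Article Is All You Need

I. Introduction

Kubernetes is an open-source container orchestration system that originated at Google. Its goal is to make deploying containerized applications simpler and more efficient: it provides mechanisms for deploying, scheduling, updating, and maintaining applications, and many large companies use it. Kubernetes is also called k8s, the short name used in the rest of this article. Let's step into the world of k8s.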

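II. k8s Overview and Features

1. Overview

- k8s is a container cluster management system that Google released in 2014
- k8s is used to deploy containerized applications
- k8s makes applications easier to scale
- The goal of k8s is to make deploying containerized applications simpler and more efficient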

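2. k8s features

(1) Automatic bin packing

Application containers are placed automatically, based on the resources each container requires from its runtime environment.

(2) Self-healing

- When a container fails, it is restarted.
- When the node it runs on has a problem, the container is redeployed and rescheduled.
- When a container fails its health check, it is shut down, and it serves traffic again only once it is running normally.

(3) Horizontal scaling

When a large number of requests arrive, the number of replicas can be increased to scale out horizontally.

(4) Service discovery

Users do not need a separate service discovery mechanism; Kubernetes provides service discovery and load balancing by itself.

(5) Rolling updates

Depending on how the application changes, the application running in the containers can be updated all at once or in batches. A newly added instance is not put into service immediately; k8s first checks that it works correctly.

(6) Version rollback

Depending on the state of the deployment, the application running in the containers can be rolled back immediately to an earlier version.

(7) Secret and configuration management

Secrets and application configuration can be deployed and updated without rebuilding images, similar to hot deployment.

(8) Storage orchestration

Storage systems are mounted and attached automatically, which is especially important for persisting the data of stateful applications. Storage can come from local directories, network storage (NFS, Gluster, Ceph, and so on), or public cloud storage services.

(9) Batch processing

One-off and scheduled tasks are provided for batch data processing and analysis.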
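Several of the features above correspond to fields of a Deployment manifest. The following is a minimal sketch, not something taken from this article: `render_deployment` is a hypothetical helper, and the probe paths, the resource values, and the rolling-update limits are assumed example values. It prints a manifest in which the resource requests feed scheduling (bin packing), the probes drive self-healing, `replicas` controls horizontal scaling, and `strategy` selects rolling updates.

```shell
#!/usr/bin/env bash
# Hypothetical helper: prints a Deployment manifest to stdout.
# Usage: render_deployment NAME IMAGE REPLICAS
render_deployment() {
  local name="$1" image="$2" replicas="$3"
  cat <<EOF
apiVersion: apps/v1
kind: Deployment
metadata:
  name: ${name}
spec:
  replicas: ${replicas}          # horizontal scaling: number of pod copies
  selector:
    matchLabels:
      app: ${name}
  strategy:
    type: RollingUpdate          # rolling update: replace pods in batches
    rollingUpdate:
      maxUnavailable: 1          # assumed example value
      maxSurge: 1                # assumed example value
  template:
    metadata:
      labels:
        app: ${name}
    spec:
      containers:
      - name: ${name}
        image: ${image}
        resources:
          requests:              # the scheduler places pods using these requests
            cpu: 100m
            memory: 128Mi
        livenessProbe:           # self-healing: restart the container if this fails
          httpGet:
            path: /
            port: 80
          initialDelaySeconds: 10
        readinessProbe:          # traffic is sent only once this succeeds
          httpGet:
            path: /
            port: 80
EOF
}

# Print an example manifest for three nginx replicas.
render_deployment web nginx 3
```

On a machine with cluster access, the output could be piped into `kubectl apply -f -`.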

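III. Components of the k8s cluster architecture

A k8s cluster consists mainly of a master node (the control node) and worker nodes.

1. Master node (control node)

- apiserver: the single entry point to the cluster. It exposes a RESTful API and hands cluster state to etcd for storage.
- scheduler: handles scheduling, choosing the node on which an application is deployed.
- controller-manager: handles routine background tasks in the cluster; each resource type has its own controller.
- etcd: stores the cluster's data, such as state data, pod data, and service data.

2. Worker node

- kubelet: the master's agent on each node; it manages the containers on that machine.
- kube-proxy: provides network proxying, load balancing, and related functions.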
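On a cluster built with kubeadm, as in the deployment section later in this article, most of these components run as pods in the `kube-system` namespace (the kubelet is the exception; it runs as a system service). The sketch below shows one way to check them. `missing_components` is a hypothetical helper, and the sample table is made-up illustrative data, not output captured from the author's cluster.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads the table printed by
#   kubectl get pods -n kube-system
# on stdin and prints every expected component that has no pod in Running status.
missing_components() {
  local table component
  table="$(cat)"
  for component in kube-apiserver kube-scheduler kube-controller-manager etcd kube-proxy; do
    # Column 1 is the pod NAME, column 3 is its STATUS.
    if ! printf '%s\n' "$table" | awk -v c="$component" \
        'index($1, c) == 1 && $3 == "Running" { found = 1 } END { exit !found }'; then
      echo "$component"
    fi
  done
}

# Illustrative input only; on a real cluster use:
#   kubectl get pods -n kube-system | missing_components
missing_components <<'EOF'
NAME                                READY   STATUS    RESTARTS   AGE
etcd-k8smaster                      1/1     Running   0          5m
kube-apiserver-k8smaster            1/1     Running   0          5m
kube-controller-manager-k8smaster   1/1     Running   0          5m
kube-scheduler-k8smaster            1/1     Running   0          5m
kube-proxy-x7k2p                    0/1     Pending   0          5m
EOF
```

With the sample table, only `kube-proxy` is reported, because its pod is not yet Running.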

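IV. Core k8s concepts

1. Pod

- The smallest deployable unit
- A collection of containers
- Containers in a pod share a network
- Short-lived

2. Controller

- Ensures the expected number of pod replicas
- Deploys stateless applications (Deployment)
- Deploys stateful applications (StatefulSet)
- Ensures that every node runs a copy of the same pod (DaemonSet)
- Runs one-off and scheduled tasks (Job and CronJob)

3. Service

- Defines the access rules for a group of pods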
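To make the Service concept concrete: a Service picks its group of pods with a label selector and exposes them on a port. The sketch below is illustrative only. `render_service` is a hypothetical helper, and it assumes the pods carry an `app: NAME` label, which this article does not specify.

```shell
#!/usr/bin/env bash
# Hypothetical helper: prints a NodePort Service manifest to stdout.
# Usage: render_service NAME PORT
render_service() {
  local name="$1" port="$2"
  cat <<EOF
apiVersion: v1
kind: Service
metadata:
  name: ${name}
spec:
  type: NodePort             # reachable on a port of every node
  selector:
    app: ${name}             # the group of pods this Service routes to (assumed label)
  ports:
  - port: ${port}            # port of the Service inside the cluster
    targetPort: ${port}      # port the containers listen on
EOF
}

# Print an example Service for pods labelled app=nginx on port 80.
render_service nginx 80
```

Every pod whose labels match the selector becomes a backend of the Service, so pods can come and go without clients changing the address they use.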

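V. Setting up a Kubernetes cluster

1. Ways to deploy a Kubernetes cluster

- kubeadm: a k8s deployment tool that provides kubeadm init and kubeadm join for bringing up a Kubernetes cluster quickly. Official documentation: https://kubernetes.io/docs/reference/setup-tools/kubeadm/kubeadm/
- Binary packages: download the release binaries from GitHub and deploy each component by hand to assemble the cluster.

kubeadm lowers the barrier to deployment but hides many details, which makes problems hard to troubleshoot. If you want more control, deploy from the binary packages: manual deployment is more work, but you learn how the components work along the way, and that helps with later maintenance.

2. Planning the cluster

- Single-master cluster
- Multi-master cluster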

VI. Deploying a k8s cluster quickly with kubeadm

1. Environment preparation

1.1 Hardware environment

| Role | IP |
|---|---|
| k8s_master | 192.168.10.100 |
| k8s_node1 | 192.168.10.102 |
| k8s_node2 | 192.168.10.103 |
| k8s_node4 | 192.168.10.104 |

1.2 Environment initialization

    # Disable the firewall
    systemctl stop firewalld
    systemctl disable firewalld

    # Disable SELinux
    sed -i "s/enforcing/disabled/" /etc/selinux/config   # permanent
    setenforce 0                                         # temporary

    # Disable swap
    swapoff -a                                           # temporary
    sed -ri "s/.*swap.*/#&/" /etc/fstab                  # permanent

    # Set the hostname according to the plan
    hostnamectl set-hostname <hostname>

    # Add hosts entries on the master
    cat >> /etc/hosts << EOF
    192.168.10.100 k8smaster
    192.168.10.102 k8snode1
    192.168.10.103 k8snode2
    192.168.10.104 k8snode4
    EOF

    # Pass bridged IPv4 traffic to the iptables chains
    cat > /etc/sysctl.d/k8s.conf << EOF
    net.bridge.bridge-nf-call-ip6tables = 1
    net.bridge.bridge-nf-call-iptables = 1
    EOF
    sysctl --system                                      # apply

    # Synchronize the time
    yum install ntpdate -y
    ntpdate time.windows.com

2. Install Docker, kubeadm, and kubelet on all nodes

Kubernetes v1.18, the version installed here, uses Docker as its default container runtime, so install Docker first.

2.1 Install Docker

    # Download the Docker CE repo file
    wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -O /etc/yum.repos.d/docker-ce.repo
    yum -y install docker-ce-18.06.1.ce-3.el7           # install Docker
    systemctl enable docker && systemctl start docker   # start Docker now and on boot
    docker --version                                    # check that the install succeeded

    # Configure a registry mirror for Docker
    cat > /etc/docker/daemon.json << EOF
    {
      "registry-mirrors": ["https://b9pmyelo.mirror.aliyuncs.com"]
    }
    EOF
    systemctl restart docker                            # restart so the mirror setting takes effect

2.2 Add the Aliyun YUM repository

    cat > /etc/yum.repos.d/kubernetes.repo << EOF
    [kubernetes]
    name=Kubernetes
    baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
    enabled=1
    gpgcheck=0
    repo_gpgcheck=0
    gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
    EOF

2.3 Install kubeadm, kubelet, and kubectl

Because new versions are released frequently, pin the version explicitly:

    yum install -y kubelet-1.18.0 kubeadm-1.18.0 kubectl-1.18.0
    systemctl enable kubelet

3. Deploy the Kubernetes master

Run the following on 192.168.10.100 (the master).

    # --apiserver-advertise-address sets the apiserver IP, which is the master's own IP
    kubeadm init \
      --apiserver-advertise-address=192.168.10.100 \
      --image-repository registry.aliyuncs.com/google_containers \
      --kubernetes-version v1.18.0 \
      --service-cidr=10.96.0.0/12 \
      --pod-network-cidr=10.244.0.0/16

If swap has not been disabled, kubeadm init fails with an error.
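Because kubeadm init stops when swap is still enabled, it can help to check before running it. The sketch below assumes a Linux host, where active swap areas are listed in `/proc/swaps` as one header line followed by one line per area. `swap_is_off` is a hypothetical helper name, not part of kubeadm.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeeds when the given swaps file lists no active swap area.
# Usage: swap_is_off [FILE]   (FILE defaults to /proc/swaps)
swap_is_off() {
  local swaps_file="${1:-/proc/swaps}"
  local entries
  # Skip the header line and count the remaining non-empty lines.
  entries="$(awk 'NR > 1 && NF { n++ } END { print n + 0 }' "$swaps_file")"
  [ "$entries" -eq 0 ]
}

# Report the state of the current host when /proc/swaps is available.
if [ -r /proc/swaps ]; then
  if swap_is_off; then
    echo "swap is off"
  else
    echo "swap is still on; run: swapoff -a"
  fi
fi
```

The file path is a parameter so the check can also be exercised against a saved copy of `/proc/swaps`.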

The output of a successful run looks like this:

    [root@hadoop100 yum.repos.d]# swapoff -a
    [root@hadoop100 yum.repos.d]# sed -ri "s/.*swap.*/#&/" /etc/fstab
    [root@hadoop100 yum.repos.d]# kubeadm init --apiserver-advertise-address=192.168.10.100 --image-repository registry.aliyuncs.com/google_containers --kubernetes-version v1.18.0 --service-cidr=10.96.0.0/12 --pod-network-cidr=10.244.0.0/16
    W0526 14:35:38.129635 19861 configset.go:202] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]
    [init] Using Kubernetes version: v1.18.0
    [preflight] Running pre-flight checks
    [WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/
    [WARNING SystemVerification]: this Docker version is not on the list of validated versions: 20.10.16. Latest validated version: 19.03
    [preflight] Pulling images required for setting up a Kubernetes cluster
    [preflight] This might take a minute or two, depending on the speed of your internet connection
    [preflight] You can also perform this action in beforehand using "kubeadm config images pull"
    [kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
    [kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
    [kubelet-start] Starting the kubelet
    [certs] Using certificateDir folder "/etc/kubernetes/pki"
    [certs] Generating "ca" certificate and key
    [certs] Generating "apiserver" certificate and key
    [certs] apiserver serving cert is signed for DNS names [k8smaster kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.10.100]
    [certs] Generating "apiserver-kubelet-client" certificate and key
    [certs] Generating "front-proxy-ca" certificate and key
    [certs] Generating "front-proxy-client" certificate and key
    [certs] Generating "etcd/ca" certificate and key
    [certs] Generating "etcd/server" certificate and key
    [certs] etcd/server serving cert is signed for DNS names [k8smaster localhost] and IPs [192.168.10.100 127.0.0.1 ::1]
    [certs] Generating "etcd/peer" certificate and key
    [certs] etcd/peer serving cert is signed for DNS names [k8smaster localhost] and IPs [192.168.10.100 127.0.0.1 ::1]
    [certs] Generating "etcd/healthcheck-client" certificate and key
    [certs] Generating "apiserver-etcd-client" certificate and key
    [certs] Generating "sa" key and public key
    [kubeconfig] Using kubeconfig folder "/etc/kubernetes"
    [kubeconfig] Writing "admin.conf" kubeconfig file
    [kubeconfig] Writing "kubelet.conf" kubeconfig file
    [kubeconfig] Writing "controller-manager.conf" kubeconfig file
    [kubeconfig] Writing "scheduler.conf" kubeconfig file
    [control-plane] Using manifest folder "/etc/kubernetes/manifests"
    [control-plane] Creating static Pod manifest for "kube-apiserver"
    [control-plane] Creating static Pod manifest for "kube-controller-manager"
    W0526 14:36:47.126661 19861 manifests.go:225] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"
    [control-plane] Creating static Pod manifest for "kube-scheduler"
    W0526 14:36:47.128024 19861 manifests.go:225] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"
    [etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"
    [wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s
    [apiclient] All control plane components are healthy after 25.002851 seconds
    [upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace
    [kubelet] Creating a ConfigMap "kubelet-config-1.18" in namespace kube-system with the configuration for the kubelets in the cluster
    [upload-certs] Skipping phase. Please see --upload-certs
    [mark-control-plane] Marking the node k8smaster as control-plane by adding the label "node-role.kubernetes.io/master="""
    [mark-control-plane] Marking the node k8smaster as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]
    [bootstrap-token] Using token: yns0bk.uts2jsm1unmvcbdp
    [bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles
    [bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes
    [bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials
    [bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token
    [bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster
    [bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace
    [kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key
    [addons] Applied essential addon: CoreDNS
    [addons] Applied essential addon: kube-proxy

    Your Kubernetes control-plane has initialized successfully!

    To start using your cluster, you need to run the following as a regular user:

      mkdir -p $HOME/.kube
      sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
      sudo chown $(id -u):$(id -g) $HOME/.kube/config

    You should now deploy a pod network to the cluster.
    Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
      https://kubernetes.io/docs/concepts/cluster-administration/addons/

    Then you can join any number of worker nodes by running the following on each as root:

    kubeadm join 192.168.10.100:6443 --token yns0bk.uts2jsm1unmvcbdp \
        --discovery-token-ca-cert-hash sha256:4d76fcbd7aa9bc0aa9165010c0203a18864a417ee6c9ad8e9add835832475743

Following the instructions in that output, set up access for the kubectl tool:

    mkdir -p $HOME/.kube
    sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
    sudo chown $(id -u):$(id -g) $HOME/.kube/config

4. Join the Kubernetes nodes

Following the master node's output, run the command below on each worker node (192.168.10.102, 192.168.10.103, 192.168.10.104):

    kubeadm join 192.168.10.100:6443 --token yns0bk.uts2jsm1unmvcbdp \
        --discovery-token-ca-cert-hash sha256:4d76fcbd7aa9bc0aa9165010c0203a18864a417ee6c9ad8e9add835832475743

The token used to join nodes is valid for 24 hours by default. Once it expires it can no longer be used, and a new token has to be created on the master (kubeadm provides the `kubeadm token create` subcommand for this), as follows:

    kubeadm d

5. Deploy the CNI network plugin

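Once all worker nodes have joined, running `kubectl get nodes` shows every node in the NotReady state. This is because the cluster has no pod network yet, so a network plugin has to be installed. There is a small pitfall here: the manifest URL in the command below could not be reached directly.

    kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

The command failed with an error. At first I assumed it was a network problem and retried several times without success; in the end the note "k8s构建Flannel网络插件失败" (Failed to set up the Flannel network plugin on k8s) solved it. Note: this has to be done on all nodes, and it runs rather slowly. The following commands show the progress:

    kubectl get pods -n kube-system   # status of all pods in kube-system
    kubectl get nodes                 # list all nodes and watch for them to become Ready

6. Test the Kubernetes cluster

Create a pod in the Kubernetes cluster and verify that it runs correctly:

    kubectl create deployment nginx --image=nginx               # pull the nginx image and deploy it
    kubectl expose deployment nginx --port=80 --type=NodePort   # expose port 80
    kubectl get pod,svc                                         # show the pod and the service port

Access URL: http://NodeIP:Port, for example http://192.168.10.103:30636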
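The port in that URL is the NodePort that Kubernetes assigned to the service; it appears in the PORT(S) column of `kubectl get pod,svc` in the form `80:30636/TCP`. The sketch below pulls it out of that table. `nodeport_of` is a hypothetical helper, and the sample row uses a made-up cluster IP together with the port from the URL above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads `kubectl get svc` (or `kubectl get pod,svc`) output
# on stdin and prints the NodePort of the named service.
# Usage: nodeport_of SERVICE_NAME
nodeport_of() {
  awk -v svc="$1" '
    ($1 == svc || $1 == "service/" svc) && $2 == "NodePort" {
      split($5, parts, "[:/]")   # PORT(S) looks like 80:30636/TCP
      print parts[2]
    }'
}

# Illustrative input only; on a real cluster use:
#   kubectl get pod,svc | nodeport_of nginx
port="$(nodeport_of nginx <<'EOF'
NAME                 TYPE        CLUSTER-IP    EXTERNAL-IP   PORT(S)        AGE
service/kubernetes   ClusterIP   10.96.0.1     <none>        443/TCP        1h
service/nginx        NodePort    10.96.0.10    <none>        80:30636/TCP   1m
EOF
)"
echo "http://192.168.10.103:${port}"
```

kubectl can also return the value directly with `kubectl get svc nginx -o jsonpath='{.spec.ports[0].nodePort}'`.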
