最新要闻

- 艾迪药业艾邦德 复邦德 上市发布会盛大召开 快播报

- 仅重998g!LG推出Gram SuperSlim笔电:10.9mm纤薄机身

- 锐龙R9-7945HX游戏本实测:性能恐怖 渲染能力媲美桌面版-环球快看

- 国产芯片新突破!龙芯3A5000成功应用于3D打印|焦点热门

- 最资讯丨画二次元画首先学什么,南京二次元画哪里可以学

- 海大集团接待AllianzGlobal等多家机构调研|全球时快讯

- 谷歌创始人大量抛售特斯拉股票:曾被曝马斯克绿了他 今日讯

- 光刻机订单占了30% ASML喊话:绝对不能失去中国市场_天天头条

- 今日快讯:小米盒子怎么看电视直播_小米盒子看电视直播的方法

- 男子聊天界面投屏广场成大型社死现场:全网都知道他不回老婆微信了 重点聚焦

- 每日精选:首例涉“虚拟数字人”侵权案宣判:被告判消除影响并赔12万

- 每日动态!89英寸三星MICRO LED电视全球首秀:RGB无机自发光、支持音画追踪

- 研究所预测:2070年日本总人口将降至8700万-每日时讯

- 华为88W充电器A/C口二选一引争议 华为李小龙:对用户最友好的设计_快资讯

- 世界快报:小小善举 温暖一座城!搀扶残障人士过马路的暖心司机找到了

- 韩媒:拜登向尹锡悦推荐“零度可乐”,韩网友嘲讽“给你的也只有零”_全球热闻

手机

iphone11大小尺寸是多少?苹果iPhone11和iPhone13的区别是什么?

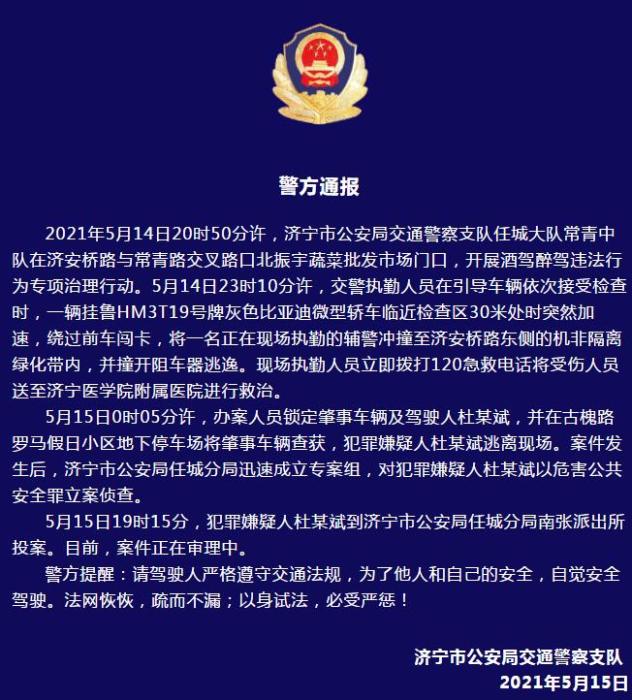

警方通报辅警执法直播中被撞飞:犯罪嫌疑人已投案

- iphone11大小尺寸是多少?苹果iPhone11和iPhone13的区别是什么?

- 警方通报辅警执法直播中被撞飞:犯罪嫌疑人已投案

- 男子被关545天申国赔:获赔18万多 驳回精神抚慰金

- 3天内26名本土感染者,辽宁确诊人数已超安徽

- 广西柳州一男子因纠纷杀害三人后自首

- 洱海坠机4名机组人员被批准为烈士 数千干部群众悼念

家电

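CutMix & Mixup Explained, with Hands-On Code

Abstract: This article uses practical examples to help you get a working grasp of CutMix and Mixup.

This article is shared from the Huawei Cloud community post 《CutMix&Mixup详解与代码实战》, by 李长安.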

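Introduction

I've recently been reviewing things I learned earlier, and when I reached data augmentation I realized my understanding of CutMix and Mixup was shakier than I thought, so I put this project together to take a proper look at both. When I first met them in object detection, I never thought about what happens to the labels. The image side is easy to follow because it is so visual, but reading the papers and related material turned up some interesting details. Below I walk through both augmentation methods. The figure shows, from left to right, the original image, mixup, cutout, and cutmix.

Offline Mixup implementation

Mixup is probably familiar to most readers, and there are plenty of good explanations online, so I won't go into detail here.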

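- Core idea of Mixup: blend two images by a ratio, and blend their labels by the same ratio.

- Key points from the paper:

  - Mixing three or more labels was considered, but the results were almost the same as with two, and it made the mixup step slower.

  - The current mixup uses a single loader to fetch a minibatch, shuffles it randomly, and mixes samples within that same minibatch. This strategy works as well as shuffling across the whole dataset, and it also reduces I/O overhead.

  - Applying mixup only among samples that share the same label does not bring a significant improvement.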
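The core idea above can be sketched in a few lines of NumPy. This is a minimal illustration, not the paper's reference code: `mixup_pair` is a hypothetical helper name, the two "images" are tiny constant arrays, and the labels are assumed to be one-hot vectors over four classes.

```python
import numpy as np

def mixup_pair(img_a, label_a, img_b, label_b, lam):
    """Blend two images and their one-hot labels with the same ratio lam."""
    img = lam * img_a + (1.0 - lam) * img_b
    label = lam * label_a + (1.0 - lam) * label_b
    return img, label

# Toy inputs (assumed for illustration): two 2x2 RGB "images" and 4-class labels.
img_a = np.zeros((2, 2, 3), dtype=np.float32)
img_b = np.ones((2, 2, 3), dtype=np.float32)
label_a = np.array([1.0, 0.0, 0.0, 0.0])
label_b = np.array([0.0, 1.0, 0.0, 0.0])

# Different classes: the label becomes 70% class 0 and 30% class 1.
mixed_img, mixed_label = mixup_pair(img_a, label_a, img_b, label_b, lam=0.7)
print(mixed_label)

# Same class on both sides: the pixels change, but the label stays one-hot.
_, same_label = mixup_pair(img_a, label_a, img_b, label_a, lam=0.7)
print(same_label)
```

The second call may help explain the last bullet: when both samples share a label, mixing only alters the pixels, so the label carries no new information.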

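The cell below only shows what Mixup looks like on images; for the actual implementation, see the online implementation later in this article.

```python
%matplotlib inline
import matplotlib.pyplot as plt
import matplotlib.image as Image
import numpy as np

# Load two images and scale pixel values to [0, 1]
# (the blend below assumes both images have the same shape)
im1 = Image.imread("work/data/10img11.jpg")
im1 = im1 / 255.
im2 = Image.imread("work/data/14img01.jpg")
im2 = im2 / 255.

# Show the blend for lam = 0.1, 0.2, ..., 0.9 in a 3x3 grid
for i in range(1, 10):
    lam = i * 0.1
    im_mixup = (im1 * lam + im2 * (1 - lam))
    plt.subplot(3, 3, i)
    plt.imshow(im_mixup)
plt.show()
```

Offline CutMix implementation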

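Put simply, cutmix is a combination of cutout and mixup, and it can be applied to a variety of tasks.

mixup blends the whole image; cutout only augments the image and leaves the label unchanged; cutmix borrows cutout's idea of working on a local patch and mixup's strategy of mixing labels, which looks like it makes sense.

- The difference between cutmix and mixup: the mixing location is chosen with a hard 0-1 mask rather than a soft operation, so the new composite image is a hard combination of two images rather than Mixup's linear combination. The label, however, is still a linear combination, as in mixup.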
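To make the hard-mask versus soft-blend distinction concrete, here is a small NumPy sketch. The 4x4 single-channel "images" and the 2x2 patch position are assumptions chosen only to keep the numbers easy to read.

```python
import numpy as np

h, w = 4, 4
img_a = np.zeros((h, w), dtype=np.float32)  # toy "image A" (all 0)
img_b = np.ones((h, w), dtype=np.float32)   # toy "image B" (all 1)

# Mixup: every pixel is a soft blend of both images.
lam = 0.75
mixup_img = lam * img_a + (1.0 - lam) * img_b

# CutMix: a binary mask decides, per pixel, which image is used.
mask = np.ones((h, w), dtype=np.float32)    # 1 -> keep image A
mask[0:2, 0:2] = 0.0                        # 0 -> paste image B in a 2x2 patch
cutmix_img = mask * img_a + (1.0 - mask) * img_b

# The label weight for image A is the fraction of pixels still taken from A.
cutmix_lam = float(mask.mean())

print(np.unique(mixup_img))   # one in-between value everywhere
print(np.unique(cutmix_img))  # only the original pixel values survive
print(cutmix_lam)
```

Both variants end up with the same label weight here (0.75 for image A), which is the sense in which the label remains a linear combination even though the pixels are combined with a hard mask.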

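To remove randomness, the code below fixes the cut position, mainly so the effect is easy to show. The changed lines are shown first; the commented-out part is the implementation most people use.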

```python
    # bbx1 = np.clip(cx - cut_w // 2, 0, W)
    # bby1 = np.clip(cy - cut_h // 2, 0, H)
    # bbx2 = np.clip(cx + cut_w // 2, 0, W)
    # bby2 = np.clip(cy + cut_h // 2, 0, H)
    bbx1 = 10
    bby1 = 600
    bbx2 = 10
    bby2 = 600
```

```python
%matplotlib inline
import glob
import numpy as np
import matplotlib.pyplot as plt
plt.rcParams["figure.figsize"] = [10, 10]
import cv2

# Path to data
data_folder = f"/home/aistudio/work/data/"

# Read filenames in the data folder
filenames = glob.glob(f"{data_folder}*.jpg")

# Read first 4 filenames
image_paths = filenames[:4]

image_batch = []
image_batch_labels = []
n_images = 4
print(image_paths)
for i in range(4):
    image = cv2.cvtColor(cv2.imread(image_paths[i]), cv2.COLOR_BGR2RGB)
    image_batch.append(image)
image_batch_labels = np.array([[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 1, 0], [0, 0, 0, 1]])

def rand_bbox(size, lamb):
    W = size[0]
    H = size[1]
    cut_rat = np.sqrt(1. - lamb)
    cut_w = int(W * cut_rat)  # plain int: the np.int alias is gone in recent NumPy
    cut_h = int(H * cut_rat)

    # uniform
    cx = np.random.randint(W)
    cy = np.random.randint(H)

    # bbx1 = np.clip(cx - cut_w // 2, 0, W)
    # bby1 = np.clip(cy - cut_h // 2, 0, H)
    # bbx2 = np.clip(cx + cut_w // 2, 0, W)
    # bby2 = np.clip(cy + cut_h // 2, 0, H)
    bbx1 = 10
    bby1 = 600
    bbx2 = 10
    bby2 = 600
    return bbx1, bby1, bbx2, bby2

image = cv2.cvtColor(cv2.imread(image_paths[0]), cv2.COLOR_BGR2RGB)
# Crop a random bounding box
lamb = 0.3
size = image.shape
print("size", size)

def generate_cutmix_image(image_batch, image_batch_labels, beta):
    c = [1, 0, 3, 2]  # fixed pairing: image 0 <-> 1, image 2 <-> 3
    # generate mixed sample
    lam = np.random.beta(beta, beta)
    rand_index = np.random.permutation(len(image_batch))
    print(f"iamhere{rand_index}")
    target_a = image_batch_labels
    target_b = np.array(image_batch_labels)[c]
    print("img.shape", image_batch[0].shape)
    bbx1, bby1, bbx2, bby2 = rand_bbox(image_batch[0].shape, lam)
    print("bbx1", bbx1)
    print("bby1", bby1)
    print("bbx2", bbx2)
    print("bby2", bby2)
    image_batch_updated = image_batch.copy()
    image_batch_updated = np.array(image_batch_updated)
    image_batch = np.array(image_batch)
    # With the fixed values above, both slices run 10:600 (bbx1:bby1 and bbx2:bby2)
    image_batch_updated[:, bbx1:bby1, bbx2:bby2, :] = image_batch[c, bbx1:bby1, bbx2:bby2, :]
    # adjust lambda to exactly match pixel ratio
    # (here bbx1 == bbx2 and bby1 == bby2, so the product is 0 and lam prints as 1.0)
    lam = 1 - ((bbx2 - bbx1) * (bby2 - bby1) / (image_batch.shape[1] * image_batch.shape[2]))
    print(f"lam is {lam}")
    label = target_a * lam + target_b * (1. - lam)
    return image_batch_updated, label

# Generate CutMix image
input_image = image_batch[0]
image_batch_updated, image_batch_labels_updated = generate_cutmix_image(image_batch, image_batch_labels, 1.0)

# Show original images
print("Original Images")
for i in range(2):
    for j in range(2):
        plt.subplot(2, 2, 2 * i + j + 1)
        plt.imshow(image_batch[2 * i + j])
plt.show()

# Show CutMix images
print("CutMix Images")
for i in range(2):
    for j in range(2):
        plt.subplot(2, 2, 2 * i + j + 1)
        plt.imshow(image_batch_updated[2 * i + j])
plt.show()

# Print labels
print("Original labels:")
print(image_batch_labels)
print("Updated labels")
print(image_batch_labels_updated)
```

```
["/home/aistudio/work/data/11img01.jpg", "/home/aistudio/work/data/10img11.jpg", "/home/aistudio/work/data/14img01.jpg", "/home/aistudio/work/data/12img11.jpg"]
size (2016, 1512, 3)
iamhere[2 1 0 3]
img.shape (2016, 1512, 3)
bbx1 10
bby1 600
bbx2 10
bby2 600
lam is 1.0
Original Images
CutMix Images
Original labels:
[[1 0 0 0]
 [0 1 0 0]
 [0 0 1 0]
 [0 0 0 1]]
Updated labels
[[1. 0. 0. 0.]
 [0. 1. 0. 0.]
 [0. 0. 1. 0.]
 [0. 0. 0. 1.]]
```
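In the run above the fixed coordinates make the `(bbx2 - bbx1) * (bby2 - bby1)` term zero, so `lam` prints as 1.0 and the labels come out unchanged even though a patch was pasted. The sketch below is one way to see what the label mix would look like when the box is described unambiguously. It is an illustration under assumptions: the `(y1, y2, x1, x2)` box layout and the helper names `rand_bbox` (re-declared here with a different signature) and `lam_from_box` are not from the original code.

```python
import numpy as np

def rand_bbox(height, width, lam, rng):
    """Sample a box covering roughly (1 - lam) of the image, clipped to its borders."""
    cut_rat = np.sqrt(1.0 - lam)
    cut_h, cut_w = int(height * cut_rat), int(width * cut_rat)
    cy, cx = int(rng.integers(height)), int(rng.integers(width))
    y1 = int(np.clip(cy - cut_h // 2, 0, height))
    y2 = int(np.clip(cy + cut_h // 2, 0, height))
    x1 = int(np.clip(cx - cut_w // 2, 0, width))
    x2 = int(np.clip(cx + cut_w // 2, 0, width))
    return y1, y2, x1, x2

def lam_from_box(box, height, width):
    """Recompute lam as the fraction of pixels NOT covered by the pasted box."""
    y1, y2, x1, x2 = box
    return 1.0 - (y2 - y1) * (x2 - x1) / (height * width)

height, width = 2016, 1512      # image size printed in the run above
labels = np.eye(4)              # one-hot labels for the four images
partner = [1, 0, 3, 2]          # same pairing as `c` in the cell above

# Fixed box covering rows 10..600 and columns 10..600, as pasted in the demo.
lam_fixed = lam_from_box((10, 600, 10, 600), height, width)
mixed_fixed = labels * lam_fixed + labels[partner] * (1.0 - lam_fixed)
print(round(lam_fixed, 4))
print(mixed_fixed.round(4))

# Random box, following the commented-out "common" implementation.
rng = np.random.default_rng(0)
box = rand_bbox(height, width, lam=0.3, rng=rng)
lam_rand = lam_from_box(box, height, width)
print(box, round(lam_rand, 4))
```

Because clipping at the image border can shrink the box, the recomputed `lam` is generally at least as large as the sampled one, which is why the adjustment step exists.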

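Online Mixup & CutMix implementation

Note that in practice data augmentation is usually done online, which is the approach covered in this section, so the code below is what you would use in a real project. Mixup works on much the same principle as cutmix, so you can adapt it from my code below with a few changes.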
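Since mixup follows nearly the same recipe, here is a hedged sketch of a minibatch-level mixup in plain NumPy. It is not the author's Paddle code: `mixup_batch` and its `alpha` parameter are illustrative names, and sharing a single `lam` across the whole batch is an assumption borrowed from common implementations.

```python
import numpy as np

def mixup_batch(images, labels, alpha=1.0, rng=None):
    """Mix each sample with another sample drawn from the same minibatch."""
    rng = np.random.default_rng() if rng is None else rng
    lam = float(rng.beta(alpha, alpha))      # mixing ratio, shared by the batch
    perm = rng.permutation(len(images))      # partner index for every sample
    mixed_images = lam * images + (1.0 - lam) * images[perm]
    mixed_labels = lam * labels + (1.0 - lam) * labels[perm]
    return mixed_images, mixed_labels, lam

# Toy batch (assumed shapes): 8 images in CHW layout, 4-class one-hot labels.
rng = np.random.default_rng(42)
images = rng.random((8, 3, 16, 16)).astype(np.float32)
labels = np.eye(4, dtype=np.float32)[rng.integers(0, 4, size=8)]

mixed_images, mixed_labels, lam = mixup_batch(images, labels, alpha=1.0, rng=rng)
print(mixed_images.shape, mixed_labels.shape, round(lam, 3))
```

Mixing inside the batch avoids a second disk read per sample, whereas the per-sample CutMix in the dataset class reads a second image from disk; which trade-off is better depends on the data pipeline.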

```python
!cd "data/data97595" && unzip -q nongzuowu.zip
```

```python
# Import the required libraries
import os
import random
import warnings

import cv2
import numpy as np
import pandas as pd
import paddle
import paddle.nn as nn
import paddle.nn.functional as F
import paddle.vision.transforms as T
from paddle.io import Dataset
from paddle.metric import Accuracy
from PIL import Image
from sklearn.utils import shuffle

warnings.filterwarnings("ignore")

# Read the data
train_images = pd.read_csv("data/data97595/nongzuowu/train.csv")

# Split into training and validation sets
all_size = len(train_images)
# print(all_size)
train_size = int(all_size * 0.8)
train_df = train_images[:train_size]
val_df = train_images[train_size:]

# CutMix box-cutting helper
def rand_bbox(size, lam):
    if len(size) == 4:
        W = size[2]
        H = size[3]
    elif len(size) == 3:
        W = size[0]
        H = size[1]
    else:
        raise Exception
    cut_rat = np.sqrt(1. - lam)
    cut_w = int(W * cut_rat)  # plain int: the np.int alias is gone in recent NumPy
    cut_h = int(H * cut_rat)

    # uniform
    cx = np.random.randint(W)
    cy = np.random.randint(H)

    bbx1 = np.clip(cx - cut_w // 2, 0, W)
    bby1 = np.clip(cy - cut_h // 2, 0, H)
    bbx2 = np.clip(cx + cut_w // 2, 0, W)
    bby2 = np.clip(cy + cut_h // 2, 0, H)
    return bbx1, bby1, bbx2, bby2

# Define data preprocessing
data_transforms = T.Compose([
    T.Resize(size=(256, 256)),
    T.Transpose(),    # HWC -> CHW
    T.Normalize(
        mean=[0, 0, 0],        # scale pixel values to [0, 1]
        std=[255, 255, 255],
        to_rgb=True)
])

class JSHDataset(Dataset):
    def __init__(self, df, transforms, train=False):
        self.df = df
        self.transforms = transforms
        self.train = train

    def __getitem__(self, idx):
        row = self.df.iloc[idx]
        fn = row.image
        # Read the image
        image = cv2.imread(os.path.join("data/data97595/nongzuowu/train", fn))
        image = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)
        image = cv2.resize(image, (256, 256), interpolation=cv2.INTER_LINEAR)
        # Read the mask
        # masks = cv2.imread(os.path.join(row["mask_path"], fn), cv2.IMREAD_GRAYSCALE)/255
        # masks = cv2.resize(masks, (1024, 1024), interpolation=cv2.INTER_LINEAR)

        # Build the one-hot label
        label = paddle.zeros([4])
        label[row.label] = 1

        # ------------------------------ CutMix ------------------------------------------
        prob = 20  # set prob to 0 to turn CutMix off
        if random.randint(0, 99) < prob and self.train:
            rand_index = random.randint(0, len(self.df) - 1)
            rand_row = self.df.iloc[rand_index]
            rand_fn = rand_row.image
            rand_image = cv2.imread(os.path.join("data/data97595/nongzuowu/train", rand_fn))
            rand_image = cv2.cvtColor(rand_image, cv2.COLOR_BGR2RGB)
            rand_image = cv2.resize(rand_image, (256, 256), interpolation=cv2.INTER_LINEAR)
            # rand_masks = cv2.imread(os.path.join(rand_row["mask_path"], rand_fn), cv2.IMREAD_GRAYSCALE)/255
            # rand_masks = cv2.resize(rand_masks, (1024, 1024), interpolation=cv2.INTER_LINEAR)

            lam = np.random.beta(1, 1)
            bbx1, bby1, bbx2, bby2 = rand_bbox(image.shape, lam)

            # Paste the patch from the random image into the current image
            image[bbx1:bbx2, bby1:bby2, :] = rand_image[bbx1:bbx2, bby1:bby2, :]
            # masks[bbx1:bbx2, bby1:bby2] = rand_masks[bbx1:bbx2, bby1:bby2]

            # Recompute lam from the actual (clipped) box area, then mix the labels
            lam = 1 - ((bbx2 - bbx1) * (bby2 - bby1) / (image.shape[1] * image.shape[0]))
            rand_label = paddle.zeros([4])
            rand_label[rand_row.label] = 1
            label = label * lam + rand_label * (1. - lam)
        # --------------------------------- CutMix ---------------------------------------

        # Apply the augmentations defined earlier
        # augmented = self.transforms(image=image, mask=masks)
        # img, mask = augmented["image"], augmented["mask"]
        img = image
        return self.transforms(img), label

    def __len__(self):
        return len(self.df)

train_dataset = JSHDataset(train_df, data_transforms, train=True)
val_dataset = JSHDataset(val_df, data_transforms)

# train_loader
train_loader = paddle.io.DataLoader(train_dataset, places=paddle.CPUPlace(), batch_size=8, shuffle=True, num_workers=0)
# val_loader
val_loader = paddle.io.DataLoader(val_dataset, places=paddle.CPUPlace(), batch_size=8, shuffle=True, num_workers=0)

# Inspect one batch: image dtype and the (possibly mixed) labels
for batch_id, data in enumerate(train_loader()):
    x_data = data[0]
    y_data = data[1]
    print(x_data.dtype)
    print(y_data)
    break
```

```
paddle.float32
Tensor(shape=[8, 4], dtype=float32, place=CUDAPlace(0), stop_gradient=True,
       [[0.        , 0.        , 1.        , 0.        ],
        [0.54284668, 0.45715332, 0.        , 0.        ],
        [0.        , 1.        , 0.        , 0.        ],
        [0.        , 0.        , 1.        , 0.        ],
        [0.32958984, 0.        , 0.67041016, 0.        ],
        [0.        , 0.        , 0.        , 1.        ],
        [0.        , 0.        , 0.        , 1.        ],
        [0.        , 0.        , 0.        , 1.        ]])
```

```python
from paddle.vision.models import resnet18

model = resnet18(num_classes=4)

# Wrap the model
model = paddle.Model(model)

# Define the optimizer
optim = paddle.optimizer.Adam(learning_rate=3e-4, parameters=model.parameters())

# Configure the model (soft_label=True because CutMix produces mixed labels)
model.prepare(
    optim,
    paddle.nn.CrossEntropyLoss(soft_label=True),
    Accuracy()
)

# Train and evaluate
model.fit(train_loader,
          val_loader,
          log_freq=1,
          epochs=2,
          verbose=1,
          )
```

```
The loss value printed in the log is the current step, and the metric is the average value of previous steps.
Epoch 1/2
step 56/56 [==============================] - loss: 1.2033 - acc: 0.5843 - 96ms/step
Eval begin...
step 14/14 [==============================] - loss: 1.6905 - acc: 0.5625 - 73ms/step
Eval samples: 112
Epoch 2/2
step 56/56 [==============================] - loss: 0.5297 - acc: 0.7708 - 82ms/step
Eval begin...
step 14/14 [==============================] - loss: 0.5764 - acc: 0.7857 - 67ms/step
Eval samples: 112
```

Summary

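In CutMix, a patch of the image is replaced with part of another image, and the second image's ground-truth label is mixed in accordingly. The proportion contributed by each image (for example 0.4/0.6) is set while the image is generated. The figure shows how the CutMix authors demonstrate that this technique works better than plain MixUp and Cutout.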
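A 0.4/0.6 label like the one mentioned above is what makes a soft-label loss necessary, which is why the training cell passes `soft_label=True` to `paddle.nn.CrossEntropyLoss`. The NumPy sketch below shows the arithmetic such a loss is generally understood to perform; it is not Paddle's implementation, and `soft_cross_entropy` and the example logits are made up for illustration.

```python
import numpy as np

def soft_cross_entropy(logits, soft_labels):
    """Mean cross-entropy between softmax(logits) and soft target distributions."""
    shifted = logits - logits.max(axis=1, keepdims=True)   # numerical stability
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return float(-(soft_labels * log_probs).sum(axis=1).mean())

logits = np.array([[2.0, 1.0, 0.0, 0.0]])      # hypothetical model output
class0 = np.array([[1.0, 0.0, 0.0, 0.0]])      # one-hot label of image A
class1 = np.array([[0.0, 1.0, 0.0, 0.0]])      # one-hot label of image B
mixed = 0.4 * class0 + 0.6 * class1            # CutMix label with a 0.4/0.6 split

loss_a = soft_cross_entropy(logits, class0)
loss_b = soft_cross_entropy(logits, class1)
loss_mixed = soft_cross_entropy(logits, mixed)

# The loss on the mixed label equals the same 0.4/0.6 mix of the two hard losses.
print(loss_mixed, 0.4 * loss_a + 0.6 * loss_b)
```

This linearity is why some implementations keep hard labels and combine two loss terms weighted by `lam` instead of building a soft label; the two formulations give the same value.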

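ps: for generating neural-network heatmaps, see another project of mine.

These two methods are representative of a whole family of current data augmentation techniques, such as cutout and mosaic. Once you are comfortable with them, cutout and mosaic should be easy to understand as well.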
