最新要闻

- 每日快看:攀升年底大促!16GB轻薄本仅1698元

- 【天天报资讯】拆分数据库变相涨价!知网滥用市场支配地位被罚8760万元

- 焦点播报:神了!1919年一幅漫画预言了手机 这六个场景太真实

- 今日最新!蚊子变疫苗:中科院新研究可以从源头抑制新发传染病

- 视点!网友青岛莱西湖附近偶遇老虎 官方回应:已捉回、未造成伤害

- 李斌再度回应蔚来数据泄露 公司赔破产也不会妥协

- 【天天新视野】7.4GB/s速度 忆联发布数据中心级SSD:ES.3版容量超15TB

- 你期待谁?兔年春晚首次大联排引围观 沈腾马丽等明星现身:网友兴奋

- 奥运冠军还是学霸!谷爱凌晒斯坦福大学首份成绩单:全科满分

- 每日精选:续航200km有快充 吉利熊猫mini新春版官图发布:妹子最爱

- 重拾低价策略!京东善做PPT、说假大空话的高管被刘强东拿下:就是骗子

- “史诗级”暴风雪吹乱北美圣诞:40年最冷!节日档期票房泡汤

- 当前快报:频放生、卖鳄雀鳝!违法放生、丢弃外来入侵物种将被严惩 网友赞

- 全球简讯:云南女子取快递发现包裹长菌子:感觉很神奇

- 著名动画艺术家严定宪去世 作品有《大闹天宫》《哪吒闹海》等

- 没sei了!父亲哄4岁女儿签20年不交男友合约 网友乐翻

手机

iphone11大小尺寸是多少?苹果iPhone11和iPhone13的区别是什么?

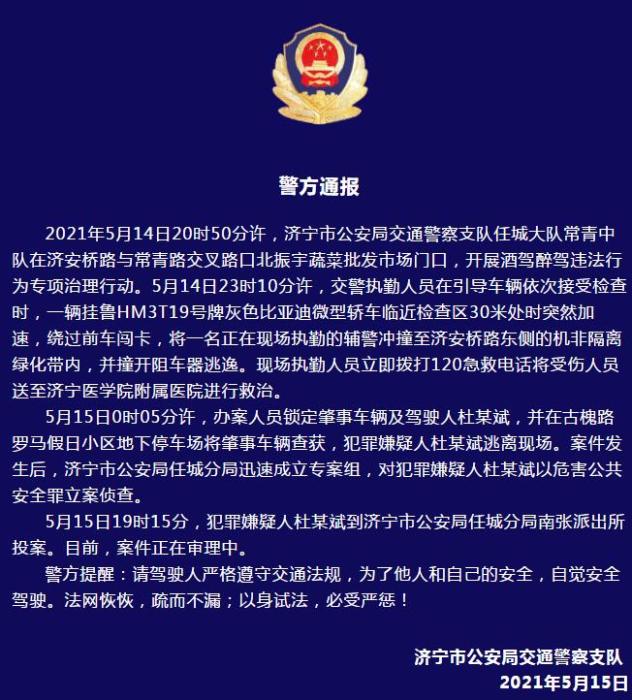

警方通报辅警执法直播中被撞飞:犯罪嫌疑人已投案

- iphone11大小尺寸是多少?苹果iPhone11和iPhone13的区别是什么?

- 警方通报辅警执法直播中被撞飞:犯罪嫌疑人已投案

- 男子被关545天申国赔:获赔18万多 驳回精神抚慰金

- 3天内26名本土感染者,辽宁确诊人数已超安徽

- 广西柳州一男子因纠纷杀害三人后自首

- 洱海坠机4名机组人员被批准为烈士 数千干部群众悼念

家电

Kubernetes Monitoring Handbook 04: Monitoring Kube-Proxy

Introduction

First, read "Kubernetes Monitoring Handbook 01: Architecture Overview" to review the Kubernetes architecture. Kube-Proxy runs on every worker node.

By default, Kube-Proxy exposes two ports. Port 10249 serves metrics: its /metrics endpoint returns monitoring data in the Prometheus format:


```
[root@tt-fc-dev01.nj lib]# curl -s http://localhost:10249/metrics | head -n 10
# HELP apiserver_audit_event_total [ALPHA] Counter of audit events generated and sent to the audit backend.
# TYPE apiserver_audit_event_total counter
apiserver_audit_event_total 0
# HELP apiserver_audit_requests_rejected_total [ALPHA] Counter of apiserver requests rejected due to an error in audit logging backend.
# TYPE apiserver_audit_requests_rejected_total counter
apiserver_audit_requests_rejected_total 0
# HELP go_gc_duration_seconds A summary of the pause duration of garbage collection cycles.
# TYPE go_gc_duration_seconds summary
go_gc_duration_seconds{quantile="0"} 2.5307e-05
go_gc_duration_seconds{quantile="0.25"} 2.8884e-05
```

Port 10256 is the health-check port. A request to its /healthz endpoint returns two timestamps:

```
[root@tt-fc-dev01.nj lib]# curl -s http://localhost:10256/healthz | jq .
{
  "lastUpdated": "2022-11-09 13:14:35.621317865 +0800 CST m=+4802354.950616250",
  "currentTime": "2022-11-09 13:14:35.621317865 +0800 CST m=+4802354.950616250"
}
```

So we only need to collect monitoring data from http://localhost:10249/metrics. Because the data is in Prometheus format, Categraf's input.prometheus plugin can handle it.
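The two /healthz timestamps can serve as a rough freshness check. Below is a minimal Python sketch that assumes the response format shown above, which is Go's `time.Time` string form including the `m=+...` monotonic-clock suffix. The helper names and the 60-second threshold are hypothetical. This is not kube-proxy's own health logic; it only compares `lastUpdated` with `currentTime`.

```python
import json
import urllib.request
from datetime import datetime, timedelta


def parse_go_time(s: str) -> datetime:
    """Parse Go's time.Time.String() form, e.g.
    '2022-11-09 13:14:35.621317865 +0800 CST m=+4802354.950616250'."""
    s = s.split(" m=")[0]                    # drop Go's monotonic-clock reading
    date, clock, offset = s.split(" ")[:3]   # the zone abbreviation ("CST") is ignored
    whole, _, frac = clock.partition(".")
    # Python's %f accepts at most 6 digits; Go prints up to 9 (nanoseconds).
    clock = f"{whole}.{(frac or '0')[:6]}"
    return datetime.strptime(f"{date} {clock} {offset}", "%Y-%m-%d %H:%M:%S.%f %z")


def healthz_lag(payload: dict) -> timedelta:
    """How far lastUpdated trails currentTime."""
    return parse_go_time(payload["currentTime"]) - parse_go_time(payload["lastUpdated"])


def is_stale(payload: dict, max_lag: timedelta = timedelta(seconds=60)) -> bool:
    """Hypothetical rule: flag kube-proxy if it has not updated within max_lag."""
    return healthz_lag(payload) > max_lag


def fetch_healthz(url: str = "http://localhost:10256/healthz", timeout: float = 3.0) -> dict:
    """GET the healthz endpoint and decode its JSON body (HTTP errors propagate)."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return json.load(resp)
```

On a node, `is_stale(fetch_healthz())` would run the check. For the sample response above, both timestamps are identical, so the lag is zero.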

Categraf prometheus plugin

The configuration file is conf/input.prometheus/prometheus.toml. Add the Kube-Proxy address to it:

```toml
interval = 15

[[instances]]
urls = [
  "http://localhost:10249/metrics"
]
labels = { job="kube-proxy" }
```

The urls field is the list of endpoints, meaning every interface that serves metrics. Test the configuration with the following command:

```
[work@tt-fc-dev01.nj categraf]$ ./categraf --test --inputs prometheus | grep kubeproxy_sync_proxy_rules
2022/11/09 13:30:17 main.go:110: I! runner.binarydir: /home/work/go/src/categraf
2022/11/09 13:30:17 main.go:111: I! runner.hostname: tt-fc-dev01.nj
2022/11/09 13:30:17 main.go:112: I! runner.fd_limits: (soft=655360, hard=655360)
2022/11/09 13:30:17 main.go:113: I! runner.vm_limits: (soft=unlimited, hard=unlimited)
2022/11/09 13:30:17 config.go:33: I! tracing disabled
2022/11/09 13:30:17 provider.go:63: I! use input provider: [local]
2022/11/09 13:30:17 agent.go:87: I! agent starting
2022/11/09 13:30:17 metrics_agent.go:93: I! input: local.prometheus started
2022/11/09 13:30:17 prometheus_scrape.go:14: I! prometheus scraping disabled!
2022/11/09 13:30:17 agent.go:98: I! agent started
13:30:17 kubeproxy_sync_proxy_rules_endpoint_changes_pending agent_hostname=tt-fc-dev01.nj instance=http://localhost:10249/metrics 0
13:30:17 kubeproxy_sync_proxy_rules_duration_seconds_count agent_hostname=tt-fc-dev01.nj instance=http://localhost:10249/metrics 319786
13:30:17 kubeproxy_sync_proxy_rules_duration_seconds_sum agent_hostname=tt-fc-dev01.nj instance=http://localhost:10249/metrics 17652.749911909214
13:30:17 kubeproxy_sync_proxy_rules_duration_seconds_bucket agent_hostname=tt-fc-dev01.nj instance=http://localhost:10249/metrics le=+Inf 319786
13:30:17 kubeproxy_sync_proxy_rules_duration_seconds_bucket agent_hostname=tt-fc-dev01.nj instance=http://localhost:10249/metrics le=0.001 0
13:30:17 kubeproxy_sync_proxy_rules_duration_seconds_bucket agent_hostname=tt-fc-dev01.nj instance=http://localhost:10249/metrics le=0.002 0
13:30:17 kubeproxy_sync_proxy_rules_duration_seconds_bucket agent_hostname=tt-fc-dev01.nj instance=http://localhost:10249/metrics le=0.004 0
13:30:17 kubeproxy_sync_proxy_rules_duration_seconds_bucket agent_hostname=tt-fc-dev01.nj instance=http://localhost:10249/metrics le=0.008 0
13:30:17 kubeproxy_sync_proxy_rules_duration_seconds_bucket agent_hostname=tt-fc-dev01.nj instance=http://localhost:10249/metrics le=0.016 0
13:30:17 kubeproxy_sync_proxy_rules_duration_seconds_bucket agent_hostname=tt-fc-dev01.nj instance=http://localhost:10249/metrics le=0.032 0
13:30:17 kubeproxy_sync_proxy_rules_duration_seconds_bucket agent_hostname=tt-fc-dev01.nj instance=http://localhost:10249/metrics le=0.064 274815
13:30:17 kubeproxy_sync_proxy_rules_duration_seconds_bucket agent_hostname=tt-fc-dev01.nj instance=http://localhost:10249/metrics le=0.128 316616
13:30:17 kubeproxy_sync_proxy_rules_duration_seconds_bucket agent_hostname=tt-fc-dev01.nj instance=http://localhost:10249/metrics le=0.256 319525
13:30:17 kubeproxy_sync_proxy_rules_duration_seconds_bucket agent_hostname=tt-fc-dev01.nj instance=http://localhost:10249/metrics le=0.512 319776
13:30:17 kubeproxy_sync_proxy_rules_duration_seconds_bucket agent_hostname=tt-fc-dev01.nj instance=http://localhost:10249/metrics le=1.024 319784
13:30:17 kubeproxy_sync_proxy_rules_duration_seconds_bucket agent_hostname=tt-fc-dev01.nj instance=http://localhost:10249/metrics le=2.048 319784
13:30:17 kubeproxy_sync_proxy_rules_duration_seconds_bucket agent_hostname=tt-fc-dev01.nj instance=http://localhost:10249/metrics le=4.096 319784
13:30:17 kubeproxy_sync_proxy_rules_duration_seconds_bucket agent_hostname=tt-fc-dev01.nj instance=http://localhost:10249/metrics le=8.192 319784
13:30:17 kubeproxy_sync_proxy_rules_duration_seconds_bucket agent_hostname=tt-fc-dev01.nj instance=http://localhost:10249/metrics le=16.384 319786
13:30:17 kubeproxy_sync_proxy_rules_service_changes_pending agent_hostname=tt-fc-dev01.nj instance=http://localhost:10249/metrics 0
13:30:17 kubeproxy_sync_proxy_rules_last_queued_timestamp_seconds agent_hostname=tt-fc-dev01.nj instance=http://localhost:10249/metrics 1.6668536394083393e+09
13:30:17 kubeproxy_sync_proxy_rules_iptables_restore_failures_total agent_hostname=tt-fc-dev01.nj instance=http://localhost:10249/metrics 0
13:30:17 kubeproxy_sync_proxy_rules_endpoint_changes_total agent_hostname=tt-fc-dev01.nj instance=http://localhost:10249/metrics 219139
13:30:17 kubeproxy_sync_proxy_rules_last_timestamp_seconds agent_hostname=tt-fc-dev01.nj instance=http://localhost:10249/metrics 1.6679718066295934e+09
13:30:17 kubeproxy_sync_proxy_rules_service_changes_total agent_hostname=tt-fc-dev01.nj instance=http://localhost:10249/metrics 512372
```

In the Kubernetes architecture, Kube-Proxy syncs rules from the APIServer and then updates the iptables or IPVS configuration. That makes the rule-sync metrics especially important, so I grepped for them here as a sample.
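The sync metrics grepped above can be turned into a few quick health signals. The Python sketch below uses the sample values from the `--test` output and does three things:

* It estimates latency quantiles from the cumulative `kubeproxy_sync_proxy_rules_duration_seconds` buckets by interpolating linearly inside the bucket that holds the target rank. This is roughly what PromQL's `histogram_quantile()` does.
* It computes the mean from `_sum` / `_count`.
* It flags a possibly hung sync when `last_queued_timestamp_seconds` is far ahead of `last_timestamp_seconds`, which is how the metric reference in this article interprets that pair.

The function names and the 300-second threshold are hypothetical.

```python
import math

# Cumulative buckets of kubeproxy_sync_proxy_rules_duration_seconds from the
# `categraf --test` output above, as (le upper bound in seconds, count).
SYNC_BUCKETS = [
    (0.001, 0), (0.002, 0), (0.004, 0), (0.008, 0), (0.016, 0), (0.032, 0),
    (0.064, 274815), (0.128, 316616), (0.256, 319525), (0.512, 319776),
    (1.024, 319784), (2.048, 319784), (4.096, 319784), (8.192, 319784),
    (16.384, 319786), (math.inf, 319786),
]
SYNC_SUM = 17652.749911909214   # ..._duration_seconds_sum
SYNC_COUNT = 319786             # ..._duration_seconds_count


def histogram_quantile(q: float, buckets) -> float:
    """Estimate the q-quantile from cumulative (upper_bound, count) buckets by
    linear interpolation inside the bucket that holds the target rank.
    Assumes positive finite bounds followed by a final +Inf bucket."""
    if not 0 < q <= 1:
        raise ValueError("q must be in (0, 1]")
    bs = sorted(buckets, key=lambda b: b[0])
    if len(bs) < 2 or not math.isinf(bs[-1][0]):
        raise ValueError("need at least one finite bucket and a +Inf bucket")
    total = bs[-1][1]
    if total == 0:
        return math.nan                      # no observations yet
    rank = q * total
    prev_bound, prev_count = 0.0, 0
    for bound, count in bs:
        if count >= rank:
            if math.isinf(bound):
                return prev_bound            # rank lies beyond the last finite bucket
            # prev_count < rank <= count, so this bucket is non-empty.
            frac = (rank - prev_count) / (count - prev_count)
            return prev_bound + (bound - prev_bound) * frac
        prev_bound, prev_count = bound, count
    return math.nan                          # unreachable: the +Inf bucket holds total


def mean_seconds(total_sum: float, count: int) -> float:
    """Average sync duration from the histogram's _sum and _count."""
    return total_sum / count if count else math.nan


def sync_looks_hung(last_queued_ts: float, last_synced_ts: float,
                    threshold_s: float = 300.0) -> bool:
    """Hypothetical rule: a sync was queued long after the last successful one."""
    return last_queued_ts - last_synced_ts > threshold_s
```

With the sample data, the median falls in the 0.032 to 0.064 s bucket and the p99 falls in the 0.064 to 0.128 s bucket. The last queued timestamp is earlier than the last successful sync, so nothing looks hung. In Nightingale or Prometheus you would normally compute the same quantile with `histogram_quantile(0.99, rate(kubeproxy_sync_proxy_rules_duration_seconds_bucket[5m]))` rather than by hand.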

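If --test prints output like this, data is being collected correctly. You then need to edit the Categraf configuration on each worker node. This approach is straightforward but tedious. Whenever you add a new Node, you must also update its Categraf configuration to add the Kube-Proxy /metrics address. That is manageable if you deploy with scripts, but cumbersome if you deploy by hand. A better option is to run Categraf as a DaemonSet. Kubernetes schedules a DaemonSet onto every Node automatically, so scaling out is no longer a concern.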

Deploying Categraf as a DaemonSet

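To run Categraf as a DaemonSet, first create a namespace. The related ConfigMaps, the DaemonSet, and any other objects will all live in that namespace. If you only need to monitor Kube-Proxy, Categraf needs just two configuration files: the main config.toml and prometheus.toml. The steps are as follows.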

Create the namespace

```
[work@tt-fc-dev01.nj categraf]$ kubectl create namespace flashcat
namespace/flashcat created
[work@tt-fc-dev01.nj categraf]$ kubectl get ns | grep flashcat
flashcat          Active   29s
```

Create the ConfigMap

The ConfigMaps hold the contents of config.toml and prometheus.toml. The YAML is below; save it as categraf-configmap-v1.yaml:

```yaml
---
kind: ConfigMap
metadata:
  name: categraf-config
apiVersion: v1
data:
  config.toml: |
    [global]
    hostname = "$HOSTNAME"
    interval = 15
    providers = ["local"]
    [writer_opt]
    batch = 2000
    chan_size = 10000
    [[writers]]
    url = "http://10.206.0.16:19000/prometheus/v1/write"
    timeout = 5000
    dial_timeout = 2500
    max_idle_conns_per_host = 100
---
kind: ConfigMap
metadata:
  name: categraf-input-prometheus
apiVersion: v1
data:
  prometheus.toml: |
    [[instances]]
    urls = ["http://127.0.0.1:10249/metrics"]
    labels = { job="kube-proxy" }
```

The address 10.206.0.16:19000 above is only an example; replace it with the address of your own n9e-server. If you would rather not push the data to Nightingale, any other time-series database that exposes a remote write endpoint also works. The line hostname = "$HOSTNAME" uses a $ placeholder. When we create the DaemonSet later, we inject the HOSTNAME environment variable so that Categraf picks up the value automatically.
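The Python sketch below illustrates the placeholder idea. It substitutes `$HOSTNAME` in the `[global]` section from the ConfigMap above using `string.Template`. It assumes the variable is expanded from the process environment; Categraf's actual mechanism may differ. In the DaemonSet defined later, HOSTNAME is taken from `spec.nodeName`, and in this cluster the node names are IPs such as 10.206.0.7. The function name is hypothetical.

```python
from string import Template

# The [global] section of config.toml from the categraf-config ConfigMap above.
CONFIG_TOML = """[global]
hostname = "$HOSTNAME"
interval = 15
providers = ["local"]
"""


def render_config(text: str, env) -> str:
    """Replace $VAR / ${VAR} placeholders with values from env (any mapping,
    e.g. os.environ). Placeholders with no value are left untouched."""
    return Template(text).safe_substitute(env)
```

Inside a pod, `render_config(CONFIG_TOML, os.environ)` would fill in the injected node name. `safe_substitute` is used instead of `substitute` so that a missing variable leaves the placeholder intact rather than raising.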

Now create the ConfigMaps:

```
[work@tt-fc-dev01.nj yamls]$ kubectl apply -f categraf-configmap-v1.yaml -n flashcat
configmap/categraf-config created
configmap/categraf-input-prometheus created
[work@tt-fc-dev01.nj yamls]$ kubectl get configmap -n flashcat
NAME                        DATA   AGE
categraf-config             1      19s
categraf-input-prometheus   1      19s
kube-root-ca.crt            1      22m
```

Create the DaemonSet

With the configuration in place, create the DaemonSet. Make sure the HOSTNAME environment variable is injected. The YAML is below; save it as categraf-daemonset-v1.yaml:

```yaml
apiVersion: apps/v1
kind: DaemonSet
metadata:
  labels:
    app: categraf-daemonset
  name: categraf-daemonset
spec:
  selector:
    matchLabels:
      app: categraf-daemonset
  template:
    metadata:
      labels:
        app: categraf-daemonset
    spec:
      containers:
      - env:
        - name: TZ
          value: Asia/Shanghai
        - name: HOSTNAME
          valueFrom:
            fieldRef:
              apiVersion: v1
              fieldPath: spec.nodeName
        - name: HOSTIP
          valueFrom:
            fieldRef:
              apiVersion: v1
              fieldPath: status.hostIP
        image: flashcatcloud/categraf:v0.2.18
        imagePullPolicy: IfNotPresent
        name: categraf
        volumeMounts:
        - mountPath: /etc/categraf/conf
          name: categraf-config
        - mountPath: /etc/categraf/conf/input.prometheus
          name: categraf-input-prometheus
      hostNetwork: true
      restartPolicy: Always
      tolerations:
      - effect: NoSchedule
        operator: Exists
      volumes:
      - configMap:
          name: categraf-config
        name: categraf-config
      - configMap:
          name: categraf-input-prometheus
        name: categraf-input-prometheus
```

Apply the DaemonSet file:

```
[work@tt-fc-dev01.nj yamls]$ kubectl apply -f categraf-daemonset-v1.yaml -n flashcat
daemonset.apps/categraf-daemonset created
[work@tt-fc-dev01.nj yamls]$ kubectl get ds -o wide -n flashcat
NAME                 DESIRED   CURRENT   READY   UP-TO-DATE   AVAILABLE   NODE SELECTOR   AGE     CONTAINERS   IMAGES                           SELECTOR
categraf-daemonset   6         6         6       6            6           <none>          2m20s   categraf     flashcatcloud/categraf:v0.2.17   app=categraf-daemonset
[work@tt-fc-dev01.nj yamls]$ kubectl get pods -o wide -n flashcat
NAME                       READY   STATUS    RESTARTS   AGE     IP            NODE          NOMINATED NODE   READINESS GATES
categraf-daemonset-4qlt9   1/1     Running   0          2m10s   10.206.0.7    10.206.0.7    <none>           <none>
categraf-daemonset-s9bk2   1/1     Running   0          2m10s   10.206.0.11   10.206.0.11   <none>           <none>
categraf-daemonset-w77lt   1/1     Running   0          2m10s   10.206.16.3   10.206.16.3   <none>           <none>
categraf-daemonset-xgwf5   1/1     Running   0          2m10s   10.206.0.16   10.206.0.16   <none>           <none>
categraf-daemonset-z9rk5   1/1     Running   0          2m10s   10.206.16.8   10.206.16.8   <none>           <none>
categraf-daemonset-zdp8v   1/1     Running   0          2m10s   10.206.0.17   10.206.0.17   <none>           <none>
```

Everything looks normal. Next, query the related metrics in Nightingale to confirm that the data is arriving.
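You can also check the rollout programmatically instead of reading the kubectl table by eye. The minimal Python sketch below assumes kubectl is on PATH and uses the names from this article. It compares three fields of the DaemonSet's status: `desiredNumberScheduled`, `numberReady`, and `updatedNumberScheduled`. The function names are hypothetical.

```python
import json
import subprocess


def daemonset_ready(status: dict) -> bool:
    """True when every node that should run the DaemonSet has an up-to-date, ready pod."""
    desired = status.get("desiredNumberScheduled", 0)
    return (
        desired > 0
        and status.get("numberReady", 0) == desired
        and status.get("updatedNumberScheduled", 0) == desired
    )


def fetch_daemonset_status(name: str = "categraf-daemonset",
                           namespace: str = "flashcat") -> dict:
    """Read .status of the DaemonSet through kubectl."""
    result = subprocess.run(
        ["kubectl", "get", "daemonset", name, "-n", namespace, "-o", "json"],
        check=True, capture_output=True, text=True,
    )
    return json.loads(result.stdout).get("status", {})
```

Calling `daemonset_ready(fetch_daemonset_status())` on the cluster above should return True, since all six pods are ready and up to date. If you only need to wait for a rollout, `kubectl rollout status daemonset/categraf-daemonset -n flashcat` is a built-in alternative.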

Metric reference

Kong Fei previously compiled notes on the Kube-Proxy metrics. I have moved them into this section for reference:

```
# HELP go_gc_duration_seconds A summary of the pause duration of garbage collection cycles.
# TYPE go_gc_duration_seconds summary
GC pause duration

# HELP go_goroutines Number of goroutines that currently exist.
# TYPE go_goroutines gauge
Number of goroutines

# HELP go_threads Number of OS threads created.
# TYPE go_threads gauge
Number of threads

# HELP kubeproxy_network_programming_duration_seconds [ALPHA] In Cluster Network Programming Latency in seconds
# TYPE kubeproxy_network_programming_duration_seconds histogram
Time from a Service or Pod change until kube-proxy finishes syncing the corresponding rules. The exact definition is involved; see https://github.com/kubernetes/community/blob/master/sig-scalability/slos/network_programming_latency.md

# HELP kubeproxy_sync_proxy_rules_duration_seconds [ALPHA] SyncProxyRules latency in seconds
# TYPE kubeproxy_sync_proxy_rules_duration_seconds histogram
Time taken to sync the rules

# HELP kubeproxy_sync_proxy_rules_endpoint_changes_pending [ALPHA] Pending proxy rules Endpoint changes
# TYPE kubeproxy_sync_proxy_rules_endpoint_changes_pending gauge
Number of Endpoint changes whose rule sync is still pending

# HELP kubeproxy_sync_proxy_rules_endpoint_changes_total [ALPHA] Cumulative proxy rules Endpoint changes
# TYPE kubeproxy_sync_proxy_rules_endpoint_changes_total counter
Total number of rule syncs triggered by Endpoint changes

# HELP kubeproxy_sync_proxy_rules_iptables_restore_failures_total [ALPHA] Cumulative proxy iptables restore failures
# TYPE kubeproxy_sync_proxy_rules_iptables_restore_failures_total counter
Total number of iptables restore failures on this host

# HELP kubeproxy_sync_proxy_rules_last_queued_timestamp_seconds [ALPHA] The last time a sync of proxy rules was queued
# TYPE kubeproxy_sync_proxy_rules_last_queued_timestamp_seconds gauge
Timestamp of the most recent rule-sync request. If it is much larger than the next metric, kubeproxy_sync_proxy_rules_last_timestamp_seconds, the sync is hung.

# HELP kubeproxy_sync_proxy_rules_last_timestamp_seconds [ALPHA] The last time proxy rules were successfully synced
# TYPE kubeproxy_sync_proxy_rules_last_timestamp_seconds gauge
Timestamp of the most recent completed rule sync

# HELP kubeproxy_sync_proxy_rules_service_changes_pending [ALPHA] Pending proxy rules Service changes
# TYPE kubeproxy_sync_proxy_rules_service_changes_pending gauge
Number of pending rule syncs caused by Service changes

# HELP kubeproxy_sync_proxy_rules_service_changes_total [ALPHA] Cumulative proxy rules Service changes
# TYPE kubeproxy_sync_proxy_rules_service_changes_total counter
Total number of rule syncs caused by Service changes

# HELP process_cpu_seconds_total Total user and system CPU time spent in seconds.
# TYPE process_cpu_seconds_total counter
Use this metric to compute CPU usage

# HELP process_max_fds Maximum number of open file descriptors.
# TYPE process_max_fds gauge
Maximum number of file descriptors the process may open

# HELP process_open_fds Number of open file descriptors.
# TYPE process_open_fds gauge
Number of file descriptors the process currently has open

# HELP process_resident_memory_bytes Resident memory size in bytes.
# TYPE process_resident_memory_bytes gauge
Memory usage

# HELP process_start_time_seconds Start time of the process since unix epoch in seconds.
# TYPE process_start_time_seconds gauge
Process start timestamp

# HELP rest_client_request_duration_seconds [ALPHA] Request latency in seconds. Broken down by verb and URL.
# TYPE rest_client_request_duration_seconds histogram
Latency of requests to the apiserver, broken down by URL and verb

# HELP rest_client_requests_total [ALPHA] Number of HTTP requests, partitioned by status code, method, and host.
# TYPE rest_client_requests_total counter
Total number of requests to the apiserver, broken down by code, method, and host
```

Importing a dashboard

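Because this setup runs Categraf as a DaemonSet, every Categraf instance scrapes the same local URL, http://127.0.0.1:10249/metrics, so the instance label is identical on all nodes. The data from each Kube-Proxy is therefore distinguished by the ident label instead of the instance label. I started from a dashboard shared on the Grafana website (original here). The adapted dashboard (here) can be imported into Nightingale and used directly.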

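Related articles

- Kubernetes Monitoring Handbook 01: Architecture Overview

- Kubernetes Monitoring Handbook 02: Host Monitoring Overview

- Kubernetes Monitoring Handbook 03: Host Monitoring in Practice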

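About the author

This article was written by Qin Xiaohui, a partner at Flashcat (快猫星云). Its content reflects the shared experience of the Flashcat technical team, edited and organized by the author. We will keep publishing technical articles on monitoring and reliability engineering. Reposting is welcome with attribution; please respect the work of the engineers involved.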
