最新要闻

- 天天亮点!百余名驻澳门部队官兵无偿献血

- 发射6枚火箭后 马斯克SpaceX的劲敌维珍轨道倒了:已申请破产

- 健康低脂 鲜嫩多汁:肌肉小王子即食鸡胸肉10袋19.9元

- iPhone自带天气应用崩了 苹果客服:没收到反馈 重启或升级试试

- 每日精选:力压美国!全球AI论文发表量前十机构:九所来自中国

- 焦点热门:电视剧《他是谁》收官!聂宝华下线了

- 每日信息:人民日报发声后!中央政法委将严查!刘国梁危险

- 世界观热点:杭州小伙高速开特斯拉 “自动驾驶”变“自动撞车”

- 全球热消息:GPT-4学会“自我反思”:测试表现提升达30%

- 世界视讯!酷睿独享大小核架构 至强CPU不会混搭:Intel解释原因

- 电视画质新高度 乐视发布85寸新品“让影像狂飙”

- 北京环球影城回应不让摄影师进:不允许商业旅拍 个人可以

- 每日热文:市应急管理局开展地震监测台站巡查工作

- 环球快报:“蜀道电行者”打好森林防火“组合拳”

- 快资讯:日媒:后锂离子电池时代竞争 中国碾压式领先日本、美国

- 全球今日讯!279元大额券:杰士邦零感003系列18枚30.9元狂促

广告

手机

iphone11大小尺寸是多少?苹果iPhone11和iPhone13的区别是什么?

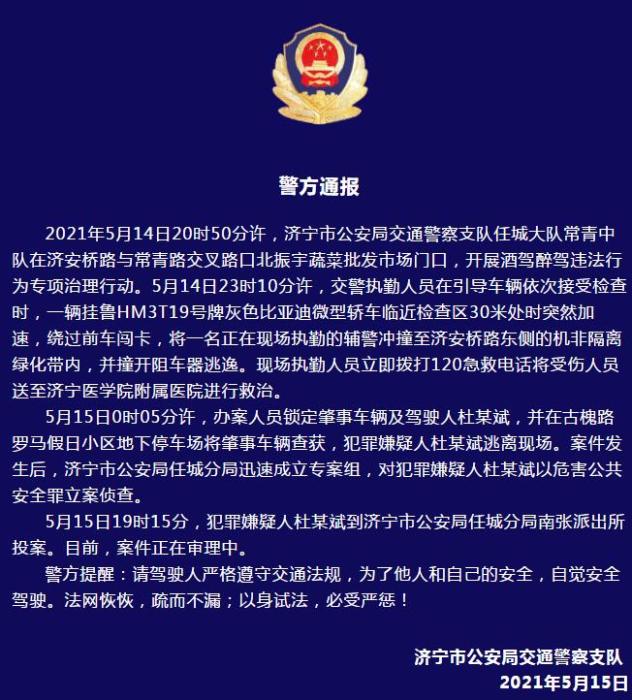

警方通报辅警执法直播中被撞飞:犯罪嫌疑人已投案

- iphone11大小尺寸是多少?苹果iPhone11和iPhone13的区别是什么?

- 警方通报辅警执法直播中被撞飞:犯罪嫌疑人已投案

- 男子被关545天申国赔:获赔18万多 驳回精神抚慰金

- 3天内26名本土感染者,辽宁确诊人数已超安徽

- 广西柳州一男子因纠纷杀害三人后自首

- 洱海坠机4名机组人员被批准为烈士 数千干部群众悼念

家电

Python Data Analysis: Week 7 Assignment Notes

Sentiment analysis of e-commerce product review data

Code 1: Deduplicating reviews

    # Code 12-1: Deduplicating reviews
    import pandas as pd
    import re
    import jieba.posseg as psg
    import numpy as np

    # Deduplicate: drop rows that are exact duplicates
    reviews = pd.read_csv("D:\\360MoveData\\Users\\86130\\Documents\\Tencent Files\\2268756693\\FileRecv\\reviews.csv")
    reviews = reviews[["content", "content_type"]].drop_duplicates()
    content = reviews["content"]

Code 2: Data cleaning

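    # Code 12-2: Data cleaning
    # Remove English letters, digits, and similar noise.
    # The reviews are mostly about Midea electric water heaters sold on JD.com,
    # so the brand and product words themselves are removed as well.
    # (The trailing empty alternative in the original pattern was dropped; it only matched empty strings.)
    strinfo = re.compile("[0-9a-zA-Z]|京东|美的|电热水器|热水器")
    content = content.apply(lambda x: strinfo.sub("", x))

Code 3: Word segmentation, part-of-speech tagging, and stop-word removal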

    # Code 12-3: Word segmentation, part-of-speech tagging, and stop-word removal
    import numpy as np

    # Segmentation: a simple helper that returns (word, POS tag) pairs
    worker = lambda s: [(x.word, x.flag) for x in psg.cut(s)]
    seg_word = content.apply(worker)

    # Convert the words into a data frame: one column for the word, one for the ID
    # of the review it belongs to, and (later) one for its position in that review
    n_word = seg_word.apply(lambda x: len(x))  # number of words in each review
    n_content = [[x+1]*y for x, y in zip(list(seg_word.index), list(n_word))]
    index_content = sum(n_content, [])  # flatten the nested list: review ID of each word
    seg_word = sum(seg_word, [])
    word = [x[0] for x in seg_word]  # words
    nature = [x[1] for x in seg_word]  # POS tags
    content_type = [[x]*y for x, y in zip(list(reviews["content_type"]), list(n_word))]
    content_type = sum(content_type, [])  # review type
    result = pd.DataFrame({"index_content": index_content, "word": word,
                           "nature": nature, "content_type": content_type})

    # Remove punctuation
    result = result[result["nature"] != "x"]  # "x" marks punctuation

    # Remove stop words (the file is now closed automatically after reading)
    with open("D:\\360MoveData\\Users\\86130\\Documents\\Tencent Files\\2268756693\\FileRecv\\stoplist.txt", "r", encoding="UTF-8") as stop_file:
        stop = stop_file.readlines()
    stop = [x.replace("\n", "") for x in stop]
    word = list(set(word) - set(stop))
    result = result[result["word"].isin(word)]

    # Build the column holding each word's position within its review
    n_word = list(result.groupby(by=["index_content"])["index_content"].count())
    index_word = [list(np.arange(0, y)) for y in n_word]
    index_word = sum(index_word, [])  # position of the word within its review

    # Combine review ID, word position, word, POS tag, and review type
    result["index_word"] = index_word

Code 4: Extracting reviews that contain nouns

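    # Code 12-4: Extracting reviews that contain nouns
    # Keep only the reviews that contain at least one noun-like word
    # (any POS tag containing "n")
    ind = result[["n" in x for x in result["nature"]]]["index_content"].unique()
    result = result[[x in ind for x in result["index_content"]]]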
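The two list comprehensions in Code 4 scan the table row by row in Python. As a minimal sketch, the same filter can be expressed with pandas' vectorized `str.contains` and `isin`. The toy table below is made up for illustration and is not taken from `reviews.csv`; only the column names follow the `result` table built in Code 3.

```python
import pandas as pd

# Hypothetical toy data standing in for the `result` table (not real review data)
toy = pd.DataFrame({
    "index_content": [1, 1, 2, 2, 3],
    "word": ["安装", "快", "很", "好", "师傅"],
    "nature": ["vn", "a", "d", "a", "n"],
})

# Rows whose POS tag contains "n" (plain substring match, no regex)
has_noun = toy["nature"].str.contains("n", regex=False)

# IDs of the reviews that contain at least one such row
noun_reviews = toy.loc[has_noun, "index_content"].unique()

# Keep every word that belongs to one of those reviews
filtered = toy[toy["index_content"].isin(noun_reviews)]
print(filtered)
```

In this toy table, review 2 contains only an adverb and an adjective, so it is dropped, while all words of reviews 1 and 3 are kept.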

Code 5: Drawing the word cloud

    # Code 12-5: Drawing the word cloud
    import matplotlib.pyplot as plt
    from wordcloud import WordCloud

    frequencies = result.groupby(by=["word"])["word"].count()
    frequencies = frequencies.sort_values(ascending=False)
    backgroud_Image = plt.imread("D:\\360MoveData\\Users\\86130\\Documents\\Tencent Files\\2268756693\\FileRecv\\pl.jpg")
    wordcloud = WordCloud(font_path="C:\\Windows\\Fonts\\STZHONGS.ttf",
                          max_words=100,
                          background_color="white",
                          mask=backgroud_Image)
    my_wordcloud = wordcloud.fit_words(frequencies)
    plt.imshow(my_wordcloud)
    plt.axis("off")
    plt.rcParams["font.sans-serif"] = ["SimHei"]  # lets matplotlib render Chinese text
    plt.title("This is a Title(学号 3110)")
    plt.show()

    # Write out the result
    result.to_csv("D:\\360MoveData\\Users\\86130\\Documents\\Tencent Files\\2268756693\\FileRecv\\word.csv", index=False, encoding="utf-8")

Code 6: Matching sentiment words

    # Code 12-6: Matching sentiment words
    import pandas as pd
    import numpy as np

    word = pd.read_csv("D:\\360MoveData\\Users\\86130\\Documents\\Tencent Files\\2268756693\\FileRecv\\word.csv")

    # Read the positive and negative evaluation and emotion word lists.
    # Note: newer pandas releases may reject sep="\n"; if so, reading the files line by line is an alternative.
    pos_comment = pd.read_csv("D:\\360MoveData\\Users\\86130\\Documents\\Tencent Files\\2268756693\\FileRecv\\正面评价词语(中文).txt", header=None, sep="\n", encoding="utf-8", engine="python")
    neg_comment = pd.read_csv("D:\\360MoveData\\Users\\86130\\Documents\\Tencent Files\\2268756693\\FileRecv\\负面评价词语(中文).txt", header=None, sep="\n", encoding="utf-8", engine="python")
    pos_emotion = pd.read_csv("D:\\360MoveData\\Users\\86130\\Documents\\Tencent Files\\2268756693\\FileRecv\\正面情感词语(中文).txt", header=None, sep="\n", encoding="utf-8", engine="python")
    neg_emotion = pd.read_csv("D:\\360MoveData\\Users\\86130\\Documents\\Tencent Files\\2268756693\\FileRecv\\负面情感词语(中文).txt", header=None, sep="\n", encoding="utf-8", engine="python")

    # Merge the emotion words with the evaluation words
    positive = set(pos_comment.iloc[:, 0]) | set(pos_emotion.iloc[:, 0])
    negative = set(neg_comment.iloc[:, 0]) | set(neg_emotion.iloc[:, 0])
    intersection = positive & negative  # words that appear in both lists
    positive = list(positive - intersection)
    negative = list(negative - intersection)
    positive = pd.DataFrame({"word": positive, "weight": [1]*len(positive)})
    negative = pd.DataFrame({"word": negative, "weight": [-1]*len(negative)})

    # DataFrame.append was removed in pandas 2.0, so pd.concat is used instead
    posneg = pd.concat([positive, negative])

    # Merge the segmentation result with the sentiment lexicon to locate sentiment words
    data_posneg = posneg.merge(word, left_on="word", right_on="word", how="right")
    data_posneg = data_posneg.sort_values(by=["index_content", "index_word"])

Code 7: Correcting sentiment polarity

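    # Code 12-7: Correcting sentiment polarity
    # Adjust each sentiment value according to whether the sentiment word is
    # preceded by a negation word or by a double negation

    # Load the negation word list
    notdict = pd.read_csv("D:\\360MoveData\\Users\\86130\\Documents\\Tencent Files\\2268756693\\FileRecv\\not.csv")
    # `in` on a Series tests the index labels, so compare against a set of the values instead
    not_terms = set(notdict["term"])

    # Handle negation modifiers
    data_posneg = data_posneg.reset_index(drop=True)  # make row labels match the positional "id"
    data_posneg["amend_weight"] = data_posneg["weight"]  # new column: sentiment value after negation correction
    data_posneg["id"] = np.arange(0, len(data_posneg))
    only_inclination = data_posneg.dropna()  # keep only words that carry a sentiment value
    only_inclination.index = np.arange(0, len(only_inclination))
    index = only_inclination["id"]

    for i in np.arange(0, len(only_inclination)):
        # The review that contains the i-th sentiment word
        review = data_posneg[data_posneg["index_content"] == only_inclination["index_content"][i]]
        review.index = np.arange(0, len(review))
        affective = only_inclination["index_word"][i]  # position of the i-th sentiment word in its review
        if affective == 1:
            # Only one preceding word to check
            ne = sum(w in not_terms for w in [review["word"][affective - 1]])
        elif affective > 1:
            # Check the two preceding words
            ne = sum(w in not_terms for w in review["word"][[affective - 1, affective - 2]])
        else:
            ne = 0
        if ne == 1:  # exactly one negation flips the sign; a double negation cancels out
            data_posneg.loc[index[i], "amend_weight"] = -data_posneg.loc[index[i], "weight"]

    # Refresh the sentiment-only rows from data_posneg so that the corrections are included
    only_inclination = data_posneg.dropna()

    # Compute the sentiment value of each review
    emotional_value = only_inclination.groupby(["index_content"], as_index=False)["amend_weight"].sum()

    # Drop reviews whose sentiment value is 0
    emotional_value = emotional_value[emotional_value["amend_weight"] != 0]

Code 8: Checking the sentiment analysis results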

    # Code 12-8: Checking the sentiment analysis results
    # Label reviews with a sentiment value above 0 as "pos" and below 0 as "neg"
    emotional_value = emotional_value.copy()  # work on a copy to avoid chained-assignment problems
    emotional_value["a_type"] = ""
    emotional_value.loc[emotional_value["amend_weight"] > 0, "a_type"] = "pos"
    emotional_value.loc[emotional_value["amend_weight"] < 0, "a_type"] = "neg"

    # Compare the predicted labels with the original review types
    result = emotional_value.merge(word, left_on="index_content", right_on="index_content", how="left")
    result = result[["index_content", "content_type", "a_type"]].drop_duplicates()
    confusion_matrix = pd.crosstab(result["content_type"], result["a_type"], margins=True)  # cross table
    accuracy = (confusion_matrix.iat[0, 0] + confusion_matrix.iat[1, 1]) / confusion_matrix.iat[2, 2]
    print(accuracy)

    # Extract the words of the positive and negative reviews
    ind_pos = list(emotional_value[emotional_value["a_type"] == "pos"]["index_content"])
    ind_neg = list(emotional_value[emotional_value["a_type"] == "neg"]["index_content"])
    posdata = word[[i in ind_pos for i in word["index_content"]]]
    negdata = word[[i in ind_neg for i in word["index_content"]]]

    # Draw the word clouds
    import matplotlib.pyplot as plt
    from wordcloud import WordCloud

    # Word cloud for positive reviews
    freq_pos = posdata.groupby(by=["word"])["word"].count()
    freq_pos = freq_pos.sort_values(ascending=False)
    backgroud_Image = plt.imread("D:\\360MoveData\\Users\\86130\\Documents\\Tencent Files\\2268756693\\FileRecv\\pl.jpg")
    wordcloud = WordCloud(font_path="C:\\Windows\\Fonts\\STZHONGS.ttf",
                          max_words=100,
                          background_color="white",
                          mask=backgroud_Image)
    pos_wordcloud = wordcloud.fit_words(freq_pos)
    plt.imshow(pos_wordcloud)
    plt.rcParams["font.sans-serif"] = ["SimHei"]  # lets matplotlib render Chinese text
    plt.title("This is a Title(学号 3110)")
    plt.axis("off")
    plt.show()

    # Word cloud for negative reviews
    freq_neg = negdata.groupby(by=["word"])["word"].count()
    freq_neg = freq_neg.sort_values(ascending=False)
    neg_wordcloud = wordcloud.fit_words(freq_neg)
    plt.imshow(neg_wordcloud)
    plt.rcParams["font.sans-serif"] = ["SimHei"]  # lets matplotlib render Chinese text
    plt.title("This is a Title(学号 3110)")
    plt.axis("off")
    plt.show()

    # Write out the results (one segmented word per row)
    posdata.to_csv("D:\\360MoveData\\Users\\86130\\Documents\\Tencent Files\\2268756693\\FileRecv\\posdata.csv", index=False, encoding="utf-8")
    negdata.to_csv("D:\\360MoveData\\Users\\86130\\Documents\\Tencent Files\\2268756693\\FileRecv\\negdata.csv", index=False, encoding="utf-8")

Code 9: Building the dictionary and corpus

    # Code 12-9: Building the dictionary and corpus
    import pandas as pd
    import numpy as np
    import re
    import itertools
    import matplotlib.pyplot as plt

    # Load the data produced by the sentiment analysis
    posdata = pd.read_csv("D:\\360MoveData\\Users\\86130\\Documents\\Tencent Files\\2268756693\\FileRecv\\posdata.csv", encoding="utf-8")
    negdata = pd.read_csv("D:\\360MoveData\\Users\\86130\\Documents\\Tencent Files\\2268756693\\FileRecv\\negdata.csv", encoding="utf-8")

    from gensim import corpora, models

    # Build the dictionaries (each word is treated as its own one-word document)
    pos_dict = corpora.Dictionary([[i] for i in posdata["word"]])  # positive
    neg_dict = corpora.Dictionary([[i] for i in negdata["word"]])  # negative

    # Build the corpora
    pos_corpus = [pos_dict.doc2bow(j) for j in [[i] for i in posdata["word"]]]  # positive
    neg_corpus = [neg_dict.doc2bow(j) for j in [[i] for i in negdata["word"]]]  # negative

Code 10: Searching for the best number of topics

    # Code 12-10: Searching for the best number of topics
    # Helper: cosine similarity between two vectors
    def cos(vector1, vector2):
        dot_product = 0.0
        normA = 0.0
        normB = 0.0
        for a, b in zip(vector1, vector2):
            dot_product += a*b
            normA += a**2
            normB += b**2
        if normA == 0.0 or normB == 0.0:
            return None
        else:
            return dot_product / ((normA*normB)**0.5)

    # Topic-number search
    def lda_k(x_corpus, x_dict):
        # Initialise the list of mean cosine similarities (1 for a single topic)
        mean_similarity = []
        mean_similarity.append(1)

        # Train a model for each topic count and measure the similarity between topics
        for i in np.arange(2, 11):
            lda = models.LdaModel(x_corpus, num_topics=i, id2word=x_dict)  # train the LDA model
            term = lda.show_topics(num_topics=i, num_words=50)  # one call returns all topics

            # Extract the words of each topic (the quoted part of every 'weight*"word"' term)
            top_word = []
            for k in np.arange(i):
                top_word.append(["".join(re.findall('"(.*)"', t))
                                 for t in term[k][1].split("+")])

            # Build the word-frequency vectors
            word = sum(top_word, [])  # all topic words
            unique_word = set(word)   # without duplicates

            # Build the topic-word matrix: one row per topic, one column per unique word
            mat = []
            for j in np.arange(i):
                top_w = top_word[j]
                mat.append(tuple([top_w.count(k) for k in unique_word]))

            p = list(itertools.permutations(list(np.arange(i)), 2))
            l = len(p)
            top_similarity = [0]
            for w in np.arange(l):
                vector1 = mat[p[w][0]]
                vector2 = mat[p[w][1]]
                top_similarity.append(cos(vector1, vector2))

            # Mean cosine similarity over all topic pairs
            mean_similarity.append(sum(top_similarity)/l)
        return mean_similarity

    # Compute the mean cosine similarity between topics
    pos_k = lda_k(pos_corpus, pos_dict)
    neg_k = lda_k(neg_corpus, neg_dict)

    # Plot the mean cosine similarity between topics
    from matplotlib.font_manager import FontProperties
    font = FontProperties(size=14)
    # Make Chinese text and minus signs render correctly
    plt.rcParams["font.sans-serif"] = ["SimHei"]
    plt.rcParams["axes.unicode_minus"] = False
    fig = plt.figure(figsize=(10, 8))
    ax1 = fig.add_subplot(211)
    ax1.plot(pos_k)
    ax1.set_xlabel("(学号 3110)正面评论LDA主题数寻优", fontproperties=font)
    ax2 = fig.add_subplot(212)
    ax2.plot(neg_k)
    ax2.set_xlabel("(学号 3110)负面评论LDA主题数寻优", fontproperties=font)

Code 11: LDA topic analysis

    # Code 12-11: LDA topic analysis
    pos_lda = models.LdaModel(pos_corpus, num_topics=3, id2word=pos_dict)
    neg_lda = models.LdaModel(neg_corpus, num_topics=3, id2word=neg_dict)
    pos_lda.print_topics(num_words=10)
    neg_lda.print_topics(num_words=10)

    [(0, '0.124*"安装" + 0.031*"垃圾" + 0.031*"师傅" + 0.018*"小时" + 0.017*"不好" + 0.017*"打电话" + 0.016*"加热" + 0.015*"烧水" + 0.015*"太慢" + 0.012*"坑人"'),
     (1, '0.033*"太" + 0.031*"差" + 0.027*"安装费" + 0.020*"收" + 0.016*"漏水" + 0.014*"人员" + 0.014*"真的" + 0.013*"坑" + 0.012*"服务" + 0.012*"高"'),
     (2, '0.030*"售后" + 0.021*"东西" + 0.020*"客服" + 0.019*"装" + 0.017*"收费" + 0.017*"贵" + 0.016*"慢" + 0.011*"产品" + 0.011*"上门" + 0.010*"评"')]
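Each topic in the output above is a single string of `weight*"word"` terms joined by `+`, which is awkward to sort or tabulate. The sketch below shows one possible way to split such strings into (word, weight) pairs with a regular expression. The helper name `parse_topic` is hypothetical, and the two sample strings are excerpts shortened from the output above.

```python
import re

# Excerpt shortened from the topic output above, in the same string format
topics = [
    (0, '0.124*"安装" + 0.031*"垃圾" + 0.031*"师傅"'),
    (1, '0.033*"太" + 0.031*"差" + 0.027*"安装费"'),
]

def parse_topic(topic_str):
    """Split a 'weight*"word" + ...' string into (word, weight) pairs."""
    # Each match captures the numeric weight and the quoted word
    pairs = re.findall(r'([0-9.]+)\*"([^"]+)"', topic_str)
    return [(word, float(weight)) for weight, word in pairs]

# Map each topic ID to its list of (word, weight) pairs
parsed = {topic_id: parse_topic(s) for topic_id, s in topics}
for topic_id, pairs in parsed.items():
    print(topic_id, pairs)
```

With the pairs in this form, the words of a topic can be ranked, filtered by weight, or loaded into a data frame for comparison across topics.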
