最新要闻

- 每日消息!性能超越电竞手机!Redmi K60 Pro综合跑分达135万

- 信息:千万别强忍 20岁小伙憋气压抑咳嗽导致昏厥

- 特斯拉今年股价累计暴跌超60%!马斯克透露大跌原因

- 收购动视暴雪遇阻 微软哭弱:根本打不过索尼、任天堂

- 到手9袋!良品铺子坚果礼盒1440 仅44元包邮

- 户外运动有哪些项目?户外运动品牌排行榜

- 什么鱼营养价值最高?什么鱼只会逆流而上?

- 金木水火土命怎么算出来的?金木水火土哪个腿长?

- 玉面小飞龙是什么意思?玉面小飞龙出自哪里?

- Redmi K60系列上架:三颗口碑最好的芯片都拿到了 12月27日发

- 每日聚焦:最快闪充旗舰!真我GT Neo5充电头曝光:支持240W充电

- 环球热讯:紫米裁员80%并入小米?官方澄清:ZMI品牌将继续存在

- 全球新资讯:9.99万元遭疯抢 五菱宏光MINI EV敞篷版下线:能跑280km

- 苯胺皮是什么皮?苯胺皮和纳帕皮有什么区别?

- 焦点速讯:字节鏖战美团的关键一役

- 重点聚焦!糗事百科宣布将关闭服务 自侃“享年17岁”

手机

iphone11大小尺寸是多少?苹果iPhone11和iPhone13的区别是什么?

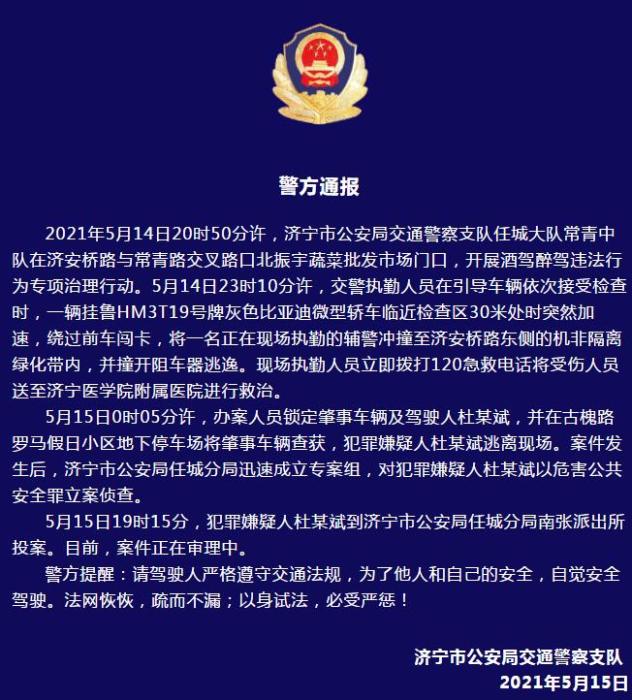

警方通报辅警执法直播中被撞飞:犯罪嫌疑人已投案

- iphone11大小尺寸是多少?苹果iPhone11和iPhone13的区别是什么?

- 警方通报辅警执法直播中被撞飞:犯罪嫌疑人已投案

- 男子被关545天申国赔:获赔18万多 驳回精神抚慰金

- 3天内26名本土感染者,辽宁确诊人数已超安徽

- 广西柳州一男子因纠纷杀害三人后自首

- 洱海坠机4名机组人员被批准为烈士 数千干部群众悼念

家电

Machine Learning: Fruit and Vegetable Classification

I. Background of the Topic

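To classify and recognize fruits and vegetables, images of 36 kinds of produce were collected, including banana, apple, pear, grape, orange, kiwi, watermelon, pomegranate, pineapple, mango, cucumber, carrot, chili pepper, onion, potato, lemon, tomato, radish, beetroot, cabbage, lettuce, spinach, soybean, cauliflower, bell pepper, corn, sweetcorn, sweet potato, paprika, ginger, garlic, peas, and eggplant. The original write-up states that resnet18 is used for classification; the code shown in this write-up, however, defines a custom convolutional neural network.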

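II. Design of the Machine Learning Case

1. Source of the training and test sets used in this project

The dataset comes from the Baidu AI Studio platform (https://aistudio.baidu.com/aistudio/datasetdetail/119023/0). It contains 36 kinds of fruits and vegetables, and each class includes 100 training images, 10 test images, and 10 validation images.

2. Description of the machine learning framework

The network built here mainly uses 2D convolution, activation functions, max pooling, Dropout, and fully connected layers. The model is explained below.

The first layer is a 2D convolution with 3 input channels, 100 output channels, a 3*3 kernel, and 1*1 padding. There are 3 input channels because this is the first convolution and its input is an RGB image with three channels; the number of output channels can be chosen to suit the task. Padding is applied so that the convolution does not change the image size.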
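The claim that a 3*3 kernel with padding 1 preserves the image size can be checked with the standard output-size formula for a convolution. The sketch below is only an illustration; the helper name `conv2d_output_size` is hypothetical and not part of the original project.

```python
def conv2d_output_size(size: int, kernel: int, padding: int = 0, stride: int = 1) -> int:
    """Output length along one spatial dimension of a 2D convolution."""
    return (size + 2 * padding - kernel) // stride + 1


# A 3x3 kernel with padding 1 and stride 1 leaves the size unchanged.
same = conv2d_output_size(40, kernel=3, padding=1)
# Without padding, the feature map shrinks by kernel - 1 = 2 pixels.
shrunk = conv2d_output_size(40, kernel=3, padding=0)
print(same, shrunk)
```

With padding the 40-pixel side stays at 40; without it the side drops to 38, and the loss would accumulate over every convolution in the stack.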

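The convolution layer is followed by a ReLU activation function. Without an activation function, the output of each layer is a linear function of its input. It is easy to verify that, however many layers the network has, the output is then still a linear combination of the input, which is equivalent to having no hidden layer at all. A nonlinear activation function is therefore introduced so that a deep network becomes meaningful: the output is no longer a linear combination of the input, and the network can approximate arbitrary functions. The earliest choices were the sigmoid and tanh functions, whose bounded outputs serve conveniently as the input of the next layer.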
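The "easy to verify" step can be made concrete with two tiny weight matrices. This is a minimal sketch in plain Python, with biases omitted for brevity and with made-up weights `W1`, `W2` and input `x`: two stacked linear layers give exactly the same result as one merged layer, while inserting ReLU between them does not.

```python
def matvec(m, v):
    """Multiply matrix m (list of rows) by vector v."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def matmul(a, b):
    """Multiply matrix a by matrix b."""
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


def relu(v):
    return [max(0.0, x) for x in v]


# Illustrative weights and input (not taken from the trained model).
W1 = [[1.0, -2.0], [0.5, 1.0]]
W2 = [[2.0, 1.0], [-1.0, 3.0]]
x = [1.0, 2.0]

two_linear_layers = matvec(W2, matvec(W1, x))   # layer 2 applied to layer 1
one_merged_layer = matvec(matmul(W2, W1), x)    # a single layer with weights W2 @ W1
with_relu = matvec(W2, relu(matvec(W1, x)))     # nonlinearity in between

print(two_linear_layers, one_merged_layer, with_relu)
```

The first two results coincide, so the extra linear layer adds no expressive power; the third differs because ReLU zeroes the negative intermediate value.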

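ReLU is used for the following three reasons:

First, with functions such as sigmoid, evaluating the activation requires an exponential, which is expensive, and computing the error gradient during backpropagation involves a division, which is also relatively costly. With ReLU, the whole process requires far less computation.

Second, in deep networks the sigmoid function easily causes vanishing gradients during backpropagation: near its saturation regions the function changes very slowly and its derivative approaches 0, so information is lost and the deep network cannot be trained.

Third, ReLU sets the output of some neurons to 0, which makes the network sparse, reduces the interdependence between parameters, and mitigates overfitting.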
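The second reason can be illustrated numerically. The sketch below is a simplification that ignores the weights and only multiplies the local activation derivatives across layers; the helper names are hypothetical.

```python
import math


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def sigmoid_grad(x: float) -> float:
    s = sigmoid(x)
    return s * (1.0 - s)          # never larger than 0.25


def relu_grad(x: float) -> float:
    return 1.0 if x > 0 else 0.0  # exactly 1 for active units


def chained_grad(local_grad, x: float, depth: int) -> float:
    """Product of `depth` identical local derivatives (weights ignored)."""
    return local_grad(x) ** depth


print(chained_grad(sigmoid_grad, 0.0, 10))  # best case for sigmoid, still tiny
print(chained_grad(relu_grad, 2.0, 10))     # stays at 1.0 for an active ReLU path
print(sigmoid_grad(10.0))                   # saturated sigmoid: derivative near 0
```

Even at its steepest point the sigmoid derivative is 0.25, so ten layers scale the gradient by less than one millionth, whereas an active ReLU path passes it through unchanged.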

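Next comes another 2D convolution layer. Its number of input channels must equal the number of output channels of the previous convolution, so it is set to 100. The number of output channels is again chosen to suit the task and is set to 150 here; the other parameters are the same as in the first convolution.

Every subsequent convolution layer and fully connected layer (except the final output layer) is followed by a ReLU activation, so the ReLU layers are not mentioned again below.

After that, a 2*2 max-pooling layer is added. It shrinks the feature maps, which speeds up computation and makes the extracted features more robust.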
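To show what 2*2 max pooling does to a feature map, here is a small plain-Python sketch on a made-up 4x4 grid; the function `max_pool_2x2` is illustrative and stands in for `nn.MaxPool2d(2, 2)`.

```python
def max_pool_2x2(grid):
    """2x2 max pooling with stride 2 on a list-of-lists feature map."""
    rows, cols = len(grid), len(grid[0])
    return [
        [
            max(grid[r][c], grid[r][c + 1], grid[r + 1][c], grid[r + 1][c + 1])
            for c in range(0, cols - 1, 2)
        ]
        for r in range(0, rows - 1, 2)
    ]


feature_map = [
    [1, 3, 2, 0],
    [4, 2, 1, 5],
    [0, 1, 7, 2],
    [3, 2, 1, 6],
]
pooled = max_pool_2x2(feature_map)
print(pooled)
```

Each 2x2 window is replaced by its largest value, so the 4x4 map becomes 2x2: a quarter of the values remain, and small shifts of a strong response inside a window do not change the output.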

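After further convolution and pooling (in the code, four more convolution layers and two more max-pooling layers), Flatten turns the multi-dimensional tensor into a one-dimensional one, which is then passed through several fully connected layers. After these, a dropout layer randomly drops some nodes, which effectively helps prevent overfitting. The output size of the last fully connected layer equals the number of classes.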
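The size of the flattened vector determines the input width of the first fully connected layer. The sketch below traces the spatial size through the convolution and pooling stages, assuming the 40x40 input and the channel counts (150, 200, 250) that appear in the model code of this write-up; the helper names are hypothetical.

```python
def conv_out(size, kernel=3, padding=1, stride=1):
    return (size + 2 * padding - kernel) // stride + 1


def pool_out(size, kernel=2, stride=2):
    return (size - kernel) // stride + 1


def flattened_features(input_size, stages):
    """stages: list of (output channels of the stage, number of convolutions)."""
    size, channels = input_size, 3
    for out_channels, n_convs in stages:
        for _ in range(n_convs):
            size = conv_out(size)      # padded 3x3 convolutions keep the size
        size = pool_out(size)          # each 2x2 pooling halves it
        channels = out_channels
    return channels * size * size


# Three stages of two convolutions each, followed by one pooling layer.
STAGES = [(150, 2), (200, 2), (250, 2)]
print(flattened_features(40, STAGES))  # 40 -> 20 -> 10 -> 5, so 250 * 5 * 5
print(flattened_features(32, STAGES))  # a 32x32 input would give a different width
```

A 40x40 input yields 6250 features, matching `nn.Linear(6250, 256)`; any other input size would not fit that layer.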

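3. Technical difficulties and how they were solved

The downloaded dataset was not split into training, test, and validation sets, so the split had to be coded by hand. At first the file paths were handled incorrectly because it was not clear how os.path.join works. As a result no images were read, yet the code ran to completion without raising any error, and the output folders turned out to be empty. Debugging showed that the paths were wrong: / and \ were mixed on Windows, so the paths could not be processed correctly. Once the cause was found, \\ was used consistently and the problem was solved.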
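An alternative to hard-coding one separator is to let the standard library parse the path. The sketch below uses `pathlib.PureWindowsPath`, which accepts both / and \ and behaves the same on any operating system; the helper `class_and_stem` and the sample paths are illustrative assumptions, not part of the original code.

```python
from pathlib import PureWindowsPath


def class_and_stem(path_str: str):
    """Return (class folder name, file name without extension)."""
    p = PureWindowsPath(path_str)   # treats both "/" and "\\" as separators
    return p.parent.name, p.stem


# A path with mixed separators, as produced by glob on Windows.
print(class_and_stem("./raw\\apple/Image_1.jpg"))
print(class_and_stem("raw/banana/Image_2.png"))
```

In a real script, `os.path.basename` and `os.path.splitext` (or `pathlib.Path`) give the same result for the platform the script runs on, without any manual `split("/")` or `split("\\")`.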

III. Implementation Steps

(1) Split the dataset and resize the images

```python
import glob
import os
import random

from PIL import Image

# Convert every image to RGB and resize it to a common square size without
# distorting it, then split the images into a training set and a test set.

if __name__ == "__main__":
    test_split_ratio = 0.05  # about 5% of each class goes to the test set
    desired_size = 128       # side length of the resized square image
    raw_path = "./raw"

    # List everything under raw_path (directories and files alike) ...
    dirs = glob.glob(os.path.join(raw_path, "*"))
    # ... and keep only the directories: one per class, 36 in total.
    dirs = [d for d in dirs if os.path.isdir(d)]

    print(f"Totally {len(dirs)} classes: {dirs}")

    for class_dir in dirs:
        # Process each class separately and keep only the class name.
        # os.path.basename copes with both "/" and "\\" on Windows.
        path = os.path.basename(class_dir)
        print(path)
        # Create the output folders.
        os.makedirs(f"train/{path}", exist_ok=True)
        os.makedirs(f"test/{path}", exist_ok=True)

        # Collect the images of the current class from the raw folder.
        files = glob.glob(os.path.join(raw_path, path, "*.jpg"))
        files += glob.glob(os.path.join(raw_path, path, "*.JPG"))
        files += glob.glob(os.path.join(raw_path, path, "*.png"))
        # On case-insensitive file systems "*.jpg" and "*.JPG" match the same files.
        files = sorted(set(files))

        random.shuffle(files)  # shuffle in place before taking the test split

        boundary = int(len(files) * test_split_ratio)  # train/test boundary

        for i, file in enumerate(files):
            img = Image.open(file).convert("RGB")
            old_size = img.size
            ratio = float(desired_size) / max(old_size)
            new_size = tuple(int(x * ratio) for x in old_size)  # keep the aspect ratio
            # LANCZOS is the current name of the filter formerly called ANTIALIAS.
            im = img.resize(new_size, Image.LANCZOS)

            # The canvas is larger along one dimension; paste the image centered on it.
            new_im = Image.new("RGB", (desired_size, desired_size))
            new_im.paste(im, ((desired_size - new_size[0]) // 2,
                              (desired_size - new_size[1]) // 2))
            assert new_im.mode == "RGB"

            name = os.path.splitext(os.path.basename(file))[0] + ".jpg"
            # Indices 0..boundary go to the test set, so each class gets at least one test image.
            if i <= boundary:
                new_im.save(os.path.join(f"test/{path}", name))
            else:
                new_im.save(os.path.join(f"train/{path}", name))

    test_files = glob.glob(os.path.join("test", "*", "*.jpg"))
    train_files = glob.glob(os.path.join("train", "*", "*.jpg"))

    print(f"Totally {len(train_files)} files for training")
    print(f"Totally {len(test_files)} files for test")
```

(2) Image preprocessing

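Preprocessing includes random rotation, random flipping, and cropping, followed by normalization.

```python
import os

from torchvision import transforms

# Image preprocessing
train_dir = "./train"
val_dir = "./test"   # validation reuses the test folder
test_dir = "./test"
classes0 = os.listdir(train_dir)
classes = sorted(classes0)
print(classes)
train_transform = transforms.Compose([
    transforms.RandomRotation(10),      # rotate by up to +/-10 degrees
    transforms.RandomHorizontalFlip(),  # flip 50% of the images horizontally
    transforms.Resize(40),              # resize the shorter side to 40 pixels
    transforms.CenterCrop(40),          # center-crop to 40x40
    transforms.ToTensor(),
    transforms.Normalize([0.485, 0.456, 0.406],
                         [0.229, 0.224, 0.225])
])
```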
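The normalization step is simple per-channel arithmetic. The sketch below reproduces it for a single pixel in plain Python, using the mean and standard deviation from the transform above; `normalize_pixel` and `denormalize_pixel` are hypothetical helper names.

```python
MEAN = (0.485, 0.456, 0.406)
STD = (0.229, 0.224, 0.225)


def normalize_pixel(rgb_0_255):
    """Scale to [0, 1] (as ToTensor does), then apply (x - mean) / std per channel."""
    scaled = [v / 255.0 for v in rgb_0_255]
    return [(v - m) / s for v, m, s in zip(scaled, MEAN, STD)]


def denormalize_pixel(normalized):
    """Invert the normalization, returning values in [0, 1]."""
    return [v * s + m for v, m, s in zip(normalized, MEAN, STD)]


white = normalize_pixel((255, 255, 255))
black = normalize_pixel((0, 0, 0))
print(white, black)
print(denormalize_pixel(white))
```

Normalized values fall outside [0, 1] (roughly -2.1 to 2.6), which is why a normalized tensor looks distorted when shown directly and is usually denormalized before display.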

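```python
import matplotlib.pyplot as plt
from torchvision.datasets import ImageFolder

# Datasets as defined in the full listing; all three use train_transform.
trainset = ImageFolder(train_dir, transform=train_transform)
valset = ImageFolder(val_dir, transform=train_transform)
testset = ImageFolder(test_dir, transform=train_transform)

# Show an image
def show_image(img, label):
    print("Label: ", trainset.classes[label], "(" + str(label) + ")")
    # imshow expects height x width x channels; normalized values outside [0, 1] are clipped.
    plt.imshow(img.permute(1, 2, 0))
    plt.show()

show_image(*trainset[10])
show_image(*trainset[20])
```

(3) Read the data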

```python
from torch.utils.data import DataLoader

train_ds, val_ds, test_ds = trainset, valset, testset

batch_size = 64
train_loader = DataLoader(train_ds, batch_size, shuffle=True, num_workers=4, pin_memory=True)
val_loader = DataLoader(val_ds, batch_size*2, num_workers=4, pin_memory=True)
test_loader = DataLoader(test_ds, batch_size*2, num_workers=4, pin_memory=True)
```

(4) Build the CNN model

```python
import torch.nn as nn

# Build the CNN model (ImageClassificationBase is defined in the full listing)
class CnnModel(ImageClassificationBase):
    def __init__(self):
        super().__init__()
        # Convolutional feature extractor
        self.network = nn.Sequential(
            nn.Conv2d(3, 100, kernel_size=3, padding=1),  # Conv2D layer
            nn.ReLU(),
            nn.Conv2d(100, 150, kernel_size=3, stride=1, padding=1),
            nn.ReLU(),
            nn.MaxPool2d(2, 2),  # pooling layer: 40x40 -> 20x20

            nn.Conv2d(150, 200, kernel_size=3, stride=1, padding=1),
            nn.ReLU(),
            nn.Conv2d(200, 200, kernel_size=3, stride=1, padding=1),
            nn.ReLU(),
            nn.MaxPool2d(2, 2),  # 20x20 -> 10x10

            nn.Conv2d(200, 250, kernel_size=3, stride=1, padding=1),
            nn.ReLU(),
            nn.Conv2d(250, 250, kernel_size=3, stride=1, padding=1),
            nn.ReLU(),
            nn.MaxPool2d(2, 2),  # 10x10 -> 5x5

            # Fully connected classifier
            nn.Flatten(),
            nn.Linear(6250, 256),  # 250 channels * 5 * 5
            nn.ReLU(),
            nn.Linear(256, 128),
            nn.ReLU(),
            nn.Linear(128, 64),
            nn.ReLU(),
            nn.Linear(64, 32),
            nn.ReLU(),
            nn.Dropout(0.25),
            nn.Linear(32, len(classes)))

    def forward(self, xb):
        return self.network(xb)
```

(5) Train the network

```python
import torch
from tqdm.auto import tqdm

# to_device, device, train_dl and val_dl are defined in the full listing.

# Train the network
@torch.no_grad()
def evaluate(model, val_loader):
    model.eval()
    outputs = [model.validation_step(batch) for batch in val_loader]
    return model.validation_epoch_end(outputs)

def fit(epochs, lr, model, train_loader, val_loader, opt_func=torch.optim.SGD):
    history = []
    optimizer = opt_func(model.parameters(), lr)
    for epoch in range(epochs):
        # Training phase
        model.train()
        train_losses = []
        for batch in tqdm(train_loader, disable=True):
            loss = model.training_step(batch)
            train_losses.append(loss.detach())  # keep the value, not the graph
            loss.backward()
            optimizer.step()
            optimizer.zero_grad()
        # Validation phase
        result = evaluate(model, val_loader)
        result["train_loss"] = torch.stack(train_losses).mean().item()
        model.epoch_end(epoch, result)
        history.append(result)
    return history

model = to_device(CnnModel(), device)

# Evaluate once before training; the batches must be on the same device as the model.
history = [evaluate(model, val_dl)]

num_epochs = 100
opt_func = torch.optim.Adam
lr = 0.001

history += fit(num_epochs, lr, model, train_dl, val_dl, opt_func)
```

(6) Plot the loss and accuracy curves

```python
def plot_accuracies(history):
    accuracies = [x["val_acc"] for x in history]
    plt.plot(accuracies, "-x")
    plt.xlabel("epoch")
    plt.ylabel("accuracy")
    plt.title("Accuracy vs. No. of epochs")
    plt.show()

def plot_losses(history):
    # The first entry (evaluation before training) has no train_loss.
    train_losses = [x.get("train_loss") for x in history]
    val_losses = [x["val_loss"] for x in history]
    plt.plot(train_losses, "-bx")
    plt.plot(val_losses, "-rx")
    plt.xlabel("epoch")
    plt.ylabel("loss")
    plt.legend(["Training", "Validation"])
    plt.title("Loss vs. No. of epochs")
    plt.show()

plot_accuracies(history)
plot_losses(history)

# test_dl (see the full listing) moves each test batch to the model's device.
evaluate(model, test_dl)
```

(7) Prediction

```python
from sklearn.metrics import classification_report

# Predict the class of every test image
y_true = []
y_pred = []
model.eval()
with torch.no_grad():
    for test_data in test_loader:
        test_images, test_labels = test_data[0].to(device), test_data[1].to(device)
        pred = model(test_images).argmax(dim=1)
        for i in range(len(pred)):
            y_true.append(test_labels[i].item())
            y_pred.append(pred[i].item())

# Passing labels keeps target_names aligned even if a class is never predicted.
print(classification_report(y_true, y_pred, labels=list(range(len(classes))),
                            target_names=classes, digits=4))
```
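For readers unfamiliar with what the classification report contains, the sketch below computes overall accuracy and per-class precision and recall by hand on a tiny made-up label list. The labels and helper names are illustrative and are not results from the trained model.

```python
def overall_accuracy(y_true, y_pred):
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


def precision_recall(y_true, y_pred, label):
    """Precision and recall for one class, treating it as the positive class."""
    tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
    predicted = sum(p == label for p in y_pred)   # how often the class was predicted
    actual = sum(t == label for t in y_true)      # how often the class truly occurs
    precision = tp / predicted if predicted else 0.0
    recall = tp / actual if actual else 0.0
    return precision, recall


# Toy example with three classes (0, 1, 2).
toy_true = [0, 0, 1, 1, 2, 2]
toy_pred = [0, 1, 1, 1, 2, 0]

print(overall_accuracy(toy_true, toy_pred))
print(precision_recall(toy_true, toy_pred, label=1))
```

Here class 1 is always found when it occurs (recall 1.0), but one of the three predictions of class 1 is wrong (precision 2/3); the report prints these figures for each of the 36 classes.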

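(8) Test on a single image

```python
import matplotlib.pyplot as plt
import torch
import torchvision.transforms as transforms
from PIL import Image

# Deterministic version of the training preprocessing. The first fully connected
# layer expects 250*5*5 features, so the input must be 40x40 (not 32x32).
predict_transform = transforms.Compose([
    transforms.Resize((40, 40)),
    transforms.ToTensor(),
    transforms.Normalize([0.485, 0.456, 0.406],
                         [0.229, 0.224, 0.225]),
])

def predict(img_path):
    img = Image.open(img_path).convert("RGB")  # drop alpha / expand grayscale
    plt.imshow(img)
    plt.show()
    batch = predict_transform(img).unsqueeze(0).to(device)
    model.eval()
    with torch.no_grad():
        pred = model(batch).argmax(dim=1)
    print("Predicted class:", classes[pred.item()])
    return classes[pred.item()]

predict("./raw/apple/Image_1.jpg")
```

IV. Summary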

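In this course project, deep learning was used to classify fruits and vegetables into 36 classes, which is a relatively large number of classes. After 100 epochs of training, the classification accuracy reached 74.3%. Working on this task showed that when the training set contains few images, data augmentation can be applied to the original images to obtain more data, which improves the generalization ability of the network and helps avoid overfitting. Common augmentation methods include random flipping, random rotation, random cropping, brightness changes, Gaussian noise, and salt-and-pepper noise. The project also gave an overview of the whole image classification workflow in deep learning: collecting data, preprocessing it, building a network, training the network, and finally making predictions. I hope to learn more deep learning methods and applications in future study.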
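Two of the augmentation methods named above, horizontal flipping and salt-and-pepper noise, can be sketched on a toy grayscale image in plain Python. This is only an illustration with hypothetical helper names; the project itself relies on the torchvision transforms shown earlier.

```python
import random


def horizontal_flip(image):
    """Mirror a list-of-lists image left to right."""
    return [row[::-1] for row in image]


def salt_and_pepper(image, amount, rng):
    """Set roughly `amount` of the pixels to 0 (pepper) or 255 (salt)."""
    noisy = [row[:] for row in image]
    for r, row in enumerate(noisy):
        for c in range(len(row)):
            u = rng.random()
            if u < amount / 2:
                noisy[r][c] = 0
            elif u < amount:
                noisy[r][c] = 255
    return noisy


toy_image = [[10, 20, 30], [40, 50, 60]]
rng = random.Random(0)  # fixed seed so the example is repeatable

print(horizontal_flip(toy_image))
print(salt_and_pepper(toy_image, amount=0.3, rng=rng))
```

Each augmented copy shows the network the same object under a slightly different appearance, which is how a small dataset is stretched into a more varied training set.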

V. Full Code

```python
import glob
import os
import random

from PIL import Image

# Convert every image to RGB and resize it to a common square size without
# distorting it, then split the images into a training set and a test set.

if __name__ == "__main__":
    test_split_ratio = 0.05  # about 5% of each class goes to the test set
    desired_size = 128       # side length of the resized square image
    raw_path = "./raw"

    # List everything under raw_path (directories and files alike) ...
    dirs = glob.glob(os.path.join(raw_path, "*"))
    # ... and keep only the directories: one per class, 36 in total.
    dirs = [d for d in dirs if os.path.isdir(d)]

    print(f"Totally {len(dirs)} classes: {dirs}")

    for class_dir in dirs:
        # Process each class separately and keep only the class name.
        path = os.path.basename(class_dir)
        print(path)
        # Create the output folders.
        os.makedirs(f"train/{path}", exist_ok=True)
        os.makedirs(f"test/{path}", exist_ok=True)

        # Collect the images of the current class from the raw folder.
        files = glob.glob(os.path.join(raw_path, path, "*.jpg"))
        files += glob.glob(os.path.join(raw_path, path, "*.JPG"))
        files += glob.glob(os.path.join(raw_path, path, "*.png"))
        # On case-insensitive file systems "*.jpg" and "*.JPG" match the same files.
        files = sorted(set(files))

        random.shuffle(files)  # shuffle in place before taking the test split

        boundary = int(len(files) * test_split_ratio)  # train/test boundary

        for i, file in enumerate(files):
            img = Image.open(file).convert("RGB")
            old_size = img.size
            ratio = float(desired_size) / max(old_size)
            new_size = tuple(int(x * ratio) for x in old_size)  # keep the aspect ratio
            # LANCZOS is the current name of the filter formerly called ANTIALIAS.
            im = img.resize(new_size, Image.LANCZOS)

            # The canvas is larger along one dimension; paste the image centered on it.
            new_im = Image.new("RGB", (desired_size, desired_size))
            new_im.paste(im, ((desired_size - new_size[0]) // 2,
                              (desired_size - new_size[1]) // 2))
            assert new_im.mode == "RGB"

            name = os.path.splitext(os.path.basename(file))[0] + ".jpg"
            # Indices 0..boundary go to the test set, so each class gets at least one test image.
            if i <= boundary:
                new_im.save(os.path.join(f"test/{path}", name))
            else:
                new_im.save(os.path.join(f"train/{path}", name))

    test_files = glob.glob(os.path.join("test", "*", "*.jpg"))
    train_files = glob.glob(os.path.join("train", "*", "*.jpg"))

    print(f"Totally {len(train_files)} files for training")
    print(f"Totally {len(test_files)} files for test")
```

```python
import os

import matplotlib.pyplot as plt
import torch
import torch.nn as nn
import torch.nn.functional as F
from PIL import Image
from sklearn.metrics import classification_report
from torch.utils.data import DataLoader
from torchvision import transforms
from torchvision.datasets import ImageFolder
from torchvision.utils import make_grid
from tqdm.auto import tqdm

if __name__ == "__main__":
    # Use the second GPU; change to "0" on a single-GPU machine.
    os.environ["CUDA_VISIBLE_DEVICES"] = "1"

# Image preprocessing
train_dir = "./train"
val_dir = "./test"   # validation reuses the test folder
test_dir = "./test"
classes0 = os.listdir(train_dir)
classes = sorted(classes0)
# print(classes)
train_transform = transforms.Compose([
    transforms.RandomRotation(10),      # rotate by up to +/-10 degrees
    transforms.RandomHorizontalFlip(),  # flip 50% of the images horizontally
    transforms.Resize(40),              # resize the shorter side to 40 pixels
    transforms.CenterCrop(40),          # center-crop to 40x40
    transforms.ToTensor(),
    transforms.Normalize([0.485, 0.456, 0.406],
                         [0.229, 0.224, 0.225])
])

# All three datasets use train_transform, so evaluation also sees random augmentation.
trainset = ImageFolder(train_dir, transform=train_transform)
valset = ImageFolder(val_dir, transform=train_transform)
testset = ImageFolder(test_dir, transform=train_transform)
# print(len(trainset))

# Inspect the shape of one image in the dataset
img, label = trainset[10]
# print(img.shape)

# Show an image
def show_image(img, label):
    print("Label: ", trainset.classes[label], "(" + str(label) + ")")
    plt.imshow(img.permute(1, 2, 0))
    plt.show()

# show_image(*trainset[10])
# show_image(*trainset[20])

torch.manual_seed(10)
train_size = len(trainset)
val_size = len(valset)
test_size = len(testset)

train_ds = trainset
val_ds = valset
test_ds = testset
print(len(train_ds), len(val_ds), len(test_ds))

# Read the data
batch_size = 64
train_loader = DataLoader(train_ds, batch_size, shuffle=True, num_workers=4, pin_memory=True)
val_loader = DataLoader(val_ds, batch_size*2, num_workers=4, pin_memory=True)
test_loader = DataLoader(test_ds, batch_size*2, num_workers=4, pin_memory=True)

if __name__ == "__main__":
    # Show one batch of training images as a grid
    for images, labels in train_loader:
        fig, ax = plt.subplots(figsize=(18, 10))
        ax.set_xticks([])
        ax.set_yticks([])
        ax.imshow(make_grid(images, nrow=16).permute(1, 2, 0))
        plt.show()
        break

# Choose GPU or CPU
def get_default_device():
    if torch.cuda.is_available():
        return torch.device("cuda")
    else:
        return torch.device("cpu")

# Move data (or a model) to the chosen device
def to_device(data, device):
    if isinstance(data, (list, tuple)):
        return [to_device(x, device) for x in data]
    return data.to(device, non_blocking=True)

class DeviceDataLoader:
    """Wrap a data loader so that its batches are moved to the device."""
    def __init__(self, dl, device):
        self.dl = dl
        self.device = device

    def __iter__(self):
        # Yield each batch after moving it to the device
        for b in self.dl:
            yield to_device(b, self.device)

    def __len__(self):
        # Number of batches
        return len(self.dl)

device = get_default_device()

# Wrap each loader once; the plain loaders above stay on the CPU.
train_dl = DeviceDataLoader(train_loader, device)
val_dl = DeviceDataLoader(val_loader, device)
test_dl = DeviceDataLoader(test_loader, device)

def accuracy(outputs, labels):
    _, preds = torch.max(outputs, dim=1)
    return torch.tensor(torch.sum(preds == labels).item() / len(preds))

# Base class for image classification
class ImageClassificationBase(nn.Module):
    def training_step(self, batch):
        images, labels = batch
        out = self(images)                   # generate predictions
        loss = F.cross_entropy(out, labels)  # compute the loss
        return loss

    def validation_step(self, batch):
        images, labels = batch
        out = self(images)                   # generate predictions
        loss = F.cross_entropy(out, labels)  # compute the loss
        acc = accuracy(out, labels)          # compute the accuracy
        return {"val_loss": loss.detach(), "val_acc": acc}

    def validation_epoch_end(self, outputs):
        batch_losses = [x["val_loss"] for x in outputs]
        epoch_loss = torch.stack(batch_losses).mean()  # average the losses
        batch_accs = [x["val_acc"] for x in outputs]
        epoch_acc = torch.stack(batch_accs).mean()     # average the accuracies
        return {"val_loss": epoch_loss.item(), "val_acc": epoch_acc.item()}

    def epoch_end(self, epoch, result):
        print("Epoch [{}], train_loss: {:.4f}, val_loss: {:.4f}, val_acc: {:.4f}".format(
            epoch, result["train_loss"], result["val_loss"], result["val_acc"]))

# Build the CNN model
class CnnModel(ImageClassificationBase):
    def __init__(self):
        super().__init__()
        # Convolutional feature extractor
        self.network = nn.Sequential(
            nn.Conv2d(3, 100, kernel_size=3, padding=1),  # Conv2D layer
            nn.ReLU(),
            nn.Conv2d(100, 150, kernel_size=3, stride=1, padding=1),
            nn.ReLU(),
            nn.MaxPool2d(2, 2),  # pooling layer: 40x40 -> 20x20

            nn.Conv2d(150, 200, kernel_size=3, stride=1, padding=1),
            nn.ReLU(),
            nn.Conv2d(200, 200, kernel_size=3, stride=1, padding=1),
            nn.ReLU(),
            nn.MaxPool2d(2, 2),  # 20x20 -> 10x10

            nn.Conv2d(200, 250, kernel_size=3, stride=1, padding=1),
            nn.ReLU(),
            nn.Conv2d(250, 250, kernel_size=3, stride=1, padding=1),
            nn.ReLU(),
            nn.MaxPool2d(2, 2),  # 10x10 -> 5x5

            # Fully connected classifier
            nn.Flatten(),
            nn.Linear(6250, 256),  # 250 channels * 5 * 5
            nn.ReLU(),
            nn.Linear(256, 128),
            nn.ReLU(),
            nn.Linear(128, 64),
            nn.ReLU(),
            nn.Linear(64, 32),
            nn.ReLU(),
            nn.Dropout(0.25),
            nn.Linear(32, len(classes)))

    def forward(self, xb):
        return self.network(xb)

# Create the model and move it to the chosen device
model = to_device(CnnModel(), device)

if __name__ == "__main__":
    # Sanity check: run one batch through the untrained model
    for images, labels in train_dl:
        out = model(images)
        print("images.shape:", images.shape)
        print("out.shape:", out.shape)
        print("out[0]:", out[0])
        break

# Train the network
@torch.no_grad()
def evaluate(model, val_loader):
    model.eval()
    outputs = [model.validation_step(batch) for batch in val_loader]
    return model.validation_epoch_end(outputs)

def fit(epochs, lr, model, train_loader, val_loader, opt_func=torch.optim.SGD):
    history = []
    optimizer = opt_func(model.parameters(), lr)
    for epoch in range(epochs):
        # Training phase
        model.train()
        train_losses = []
        for batch in tqdm(train_loader, disable=True):
            loss = model.training_step(batch)
            train_losses.append(loss.detach())  # keep the value, not the graph
            loss.backward()
            optimizer.step()
            optimizer.zero_grad()
        # Validation phase
        result = evaluate(model, val_loader)
        result["train_loss"] = torch.stack(train_losses).mean().item()
        model.epoch_end(epoch, result)
        history.append(result)
    return history

history = [evaluate(model, val_dl)]
num_epochs = 5
opt_func = torch.optim.Adam
lr = 0.001

history += fit(num_epochs, lr, model, train_dl, val_dl, opt_func)

# Plot the loss and accuracy curves
def plot_accuracies(history):
    accuracies = [x["val_acc"] for x in history]
    plt.plot(accuracies, "-x")
    plt.xlabel("epoch")
    plt.ylabel("accuracy")
    plt.title("Accuracy vs. No. of epochs")
    plt.show()

def plot_losses(history):
    # The first entry (evaluation before training) has no train_loss.
    train_losses = [x.get("train_loss") for x in history]
    val_losses = [x["val_loss"] for x in history]
    plt.plot(train_losses, "-bx")
    plt.plot(val_losses, "-rx")
    plt.xlabel("epoch")
    plt.ylabel("loss")
    plt.legend(["Training", "Validation"])
    plt.title("Loss vs. No. of epochs")
    plt.show()

plot_accuracies(history)
plot_losses(history)

evaluate(model, test_dl)

# Predict the class of every test image
y_true = []
y_pred = []
model.eval()
with torch.no_grad():
    for test_data in test_loader:
        test_images, test_labels = test_data[0].to(device), test_data[1].to(device)
        pred = model(test_images).argmax(dim=1)
        for i in range(len(pred)):
            y_true.append(test_labels[i].item())
            y_pred.append(pred[i].item())

# Passing labels keeps target_names aligned even if a class is never predicted.
print(classification_report(y_true, y_pred, labels=list(range(len(classes))),
                            target_names=classes, digits=4))

# Read a single image and predict its class.
# The first fully connected layer expects 250*5*5 features, so the input
# must be 40x40 (not 32x32) and normalized as during training.
predict_transform = transforms.Compose([
    transforms.Resize((40, 40)),
    transforms.ToTensor(),
    transforms.Normalize([0.485, 0.456, 0.406],
                         [0.229, 0.224, 0.225]),
])

def predict(img_path):
    img = Image.open(img_path).convert("RGB")  # drop alpha / expand grayscale
    plt.imshow(img)
    plt.show()
    batch = predict_transform(img).unsqueeze(0).to(device)
    model.eval()
    with torch.no_grad():
        pred = model(batch).argmax(dim=1)
    print("Predicted class:", classes[pred.item()])
    return classes[pred.item()]

predict("./raw/apple/Image_1.jpg")
```
